Upload folder using huggingface_hub (#2)

- 7384a6f1c155879258ba38f468a36cb2a83c2161718f4e413712eedbca767b47 (4aac3b0c989eeb5b17cbb3bf23a6946676568d06)

- c6e84918101c8f288eb77f9f4b6fb81544504d6cc0b2f9b32df3bea3968a55ac (160d9c111f5a1b7a09728f7f7714c40ccb6a6fcc)

- config.json +1 -1

- plots.png +0 -0

- smash_config.json +1 -1

config.json CHANGED

    @@ -1,5 +1,5 @@
     {
    -  "_name_or_path": "/tmp/
    +  "_name_or_path": "/tmp/tmp020rfadf",
       "architectures": [
         "LlamaForCausalLM"
       ],

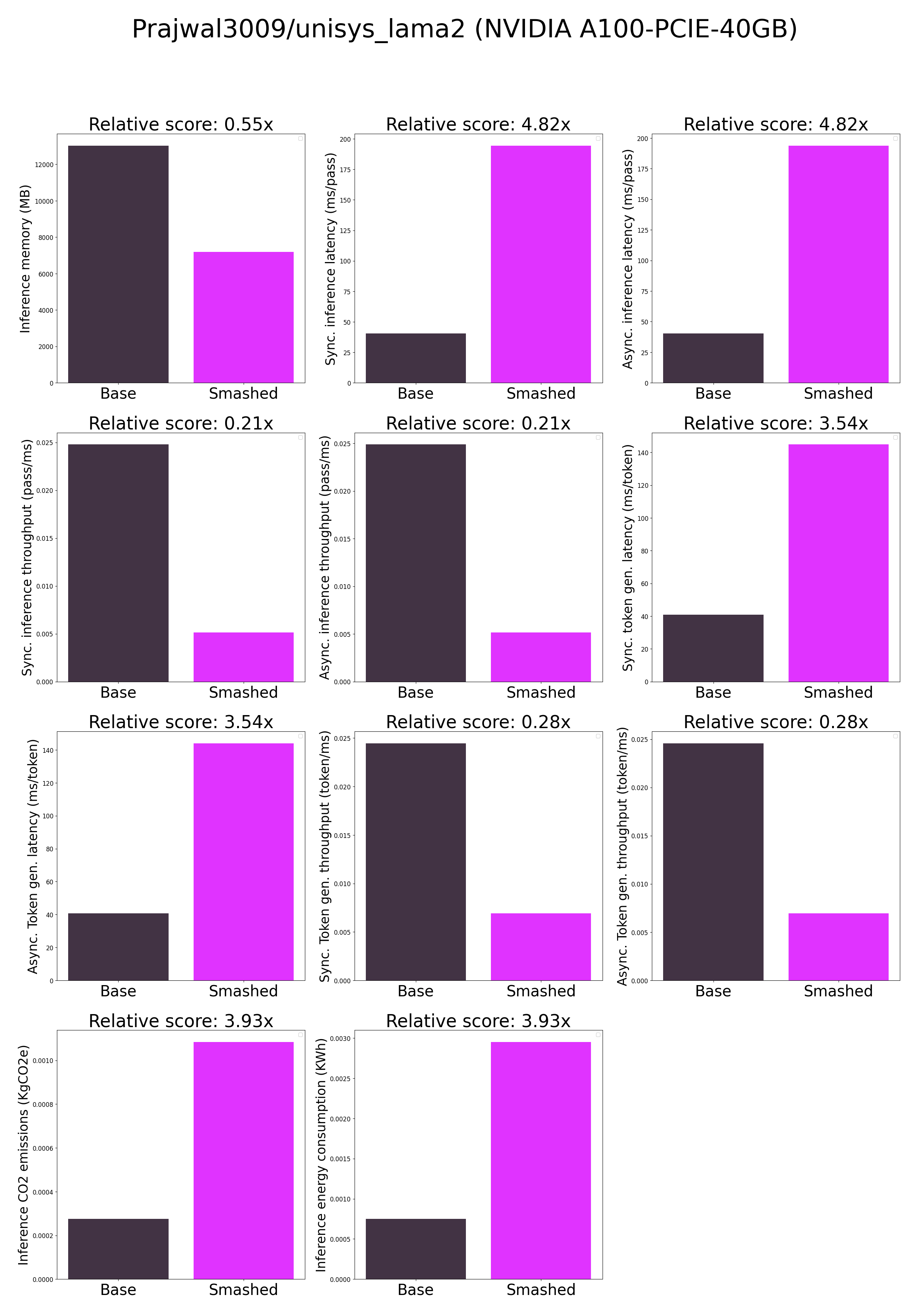

plots.png CHANGED

smash_config.json CHANGED

    @@ -8,7 +8,7 @@
       "compilers": "None",
       "task": "text_text_generation",
       "device": "cuda",
    -  "cache_dir": "/ceph/hdd/staff/charpent/.cache/
    +  "cache_dir": "/ceph/hdd/staff/charpent/.cache/modelsl43d99dw",
       "batch_size": 1,
       "model_name": "Prajwal3009/unisys_lama2",
       "pruning_ratio": 0.0,
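Both hunks above change a single path-valued key and leave every other setting untouched. A minimal sketch of how one might confirm that programmatically is shown below. The helper `changed_keys` is hypothetical (it is not part of `huggingface_hub` or any other library), and because the previous `cache_dir` value is truncated in the diff, a placeholder string stands in for it.

```python
import json


def changed_keys(old: dict, new: dict) -> dict:
    """Return {key: (old_value, new_value)} for top-level keys whose values differ.

    Hypothetical helper for illustration; a missing key is treated like None.
    """
    keys = old.keys() | new.keys()
    return {
        k: (old.get(k), new.get(k))
        for k in sorted(keys)
        if old.get(k) != new.get(k)
    }


# Values copied from the visible part of the smash_config.json hunk.
new_cfg = json.loads("""
{
  "compilers": "None",
  "task": "text_text_generation",
  "device": "cuda",
  "cache_dir": "/ceph/hdd/staff/charpent/.cache/modelsl43d99dw",
  "batch_size": 1,
  "model_name": "Prajwal3009/unisys_lama2",
  "pruning_ratio": 0.0
}
""")

# Assumption: the old cache_dir is truncated in the diff, so a placeholder is used.
old_cfg = dict(new_cfg, cache_dir="<previous cache_dir, truncated in the diff>")

diff = changed_keys(old_cfg, new_cfg)
for key, (before, after) in diff.items():
    print(f"{key}: {before!r} -> {after!r}")
```

In practice the two dictionaries would come from `json.load` on the old and new revisions of the file; only `cache_dir` is reported here, matching the `+1 -1` count listed for `smash_config.json`.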