Commit 7063f51 (parent: 785d1f8)

- README.md +94 -0

- config.json +69 -0

- preprocessor_config.json +7 -0

- pytorch_model.bin +3 -0

README.md (added)

---
license: apache-2.0
tags:
- vision
- depth-estimation
widget:
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/tiger.jpg
  example_title: Tiger
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/teapot.jpg
  example_title: Teapot
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/palace.jpg
  example_title: Palace
---

|

| 14 |

+

|

| 15 |

+

# GLPN fine-tuned on NYUv2

|

| 16 |

+

|

| 17 |

+

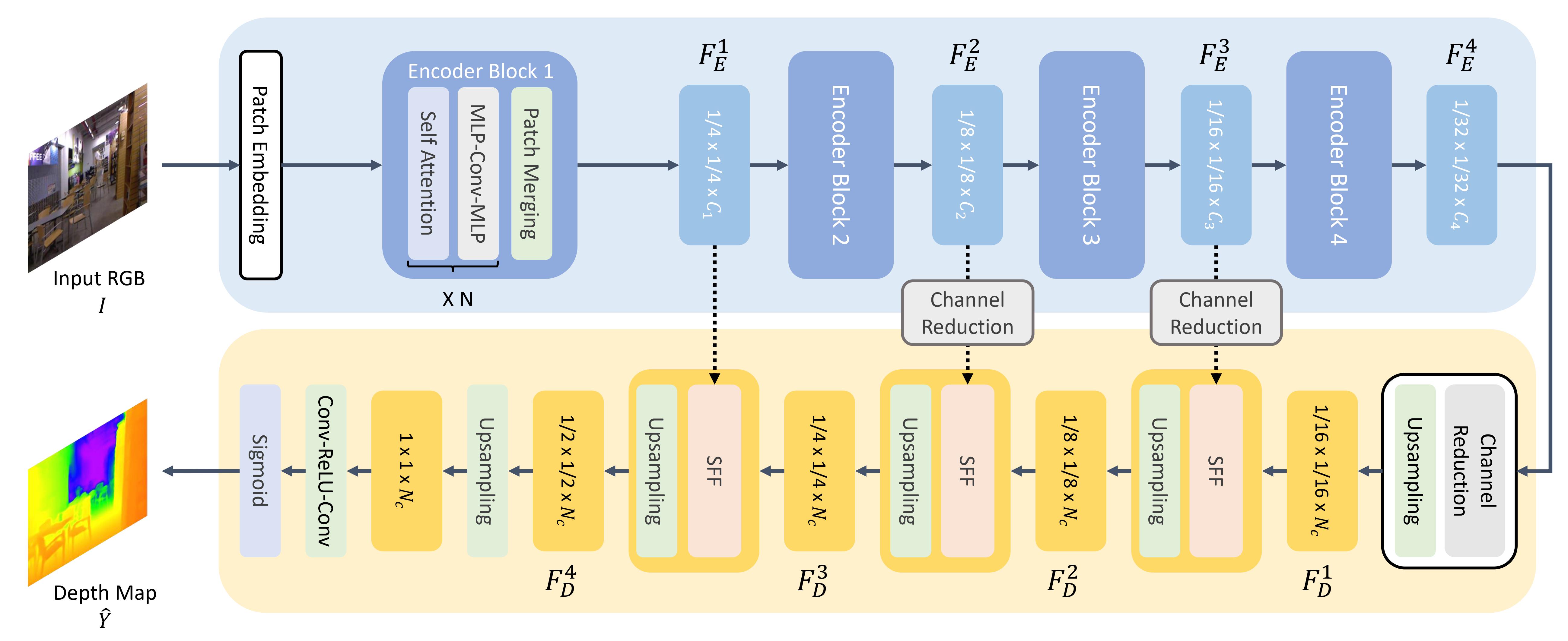

Global-Local Path Networks (GLPN) model trained on NYUv2 for monocular depth estimation. It was introduced in the paper [Global-Local Path Networks for Monocular Depth Estimation with Vertical CutDepth](https://arxiv.org/abs/2201.07436) by Kim et al. and first released in [this repository](https://github.com/vinvino02/GLPDepth).

Disclaimer: The team releasing GLPN did not write a model card for this model, so this model card has been written by the Hugging Face team.

## Model description

GLPN uses SegFormer as its backbone and adds a lightweight head on top that predicts a per-pixel depth map.
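
To make the hierarchical backbone more concrete, the pure-Python sketch below computes the shape of the feature map each of the four encoder stages produces. It uses the `patch_sizes`, `strides` and `hidden_sizes` values from this checkpoint's config.json. It assumes SegFormer-style overlapping patch embeddings, meaning a convolution with kernel `patch_size`, stride `stride` and padding `patch_size // 2`. The helper `stage_shapes` and the 480x640 example input (NYUv2's native resolution) are illustrative choices, not part of the model card.

```python
from typing import List, Tuple

# Values copied from this checkpoint's config.json.
PATCH_SIZES = [7, 3, 3, 3]
STRIDES = [4, 2, 2, 2]
HIDDEN_SIZES = [64, 128, 320, 512]


def conv_out(size: int, kernel: int, stride: int, padding: int) -> int:
    """Output length of a convolution along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


def stage_shapes(height: int, width: int) -> List[Tuple[int, int, int]]:
    """Return (channels, height, width) of each encoder stage's output.

    Hypothetical helper: assumes each stage starts with an overlapping patch
    embedding, i.e. a conv with padding = patch_size // 2.
    """
    shapes = []
    h, w = height, width
    for patch, stride, channels in zip(PATCH_SIZES, STRIDES, HIDDEN_SIZES):
        pad = patch // 2
        h = conv_out(h, patch, stride, pad)
        w = conv_out(w, patch, stride, pad)
        shapes.append((channels, h, w))
    return shapes


if __name__ == "__main__":
    for i, shape in enumerate(stage_shapes(480, 640), start=1):
        print(f"stage {i}: {shape}")
    # stage 1: (64, 120, 160)
    # stage 2: (128, 60, 80)
    # stage 3: (320, 30, 40)
    # stage 4: (512, 15, 20)
```

Under these assumptions, the stages sit at output strides of 4, 8, 16 and 32. That lines up with the `size_divisor` of 32 in preprocessor_config.json, which keeps every stage's spatial size an exact integer fraction of the input.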

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

## Intended uses & limitations

|

| 28 |

+

|

| 29 |

+

You can use the raw model for monocular depth estimation. See the [model hub](https://huggingface.co/models?search=glpn) to look for

|

| 30 |

+

fine-tuned versions on a task that interests you.

|

| 31 |

+

|

| 32 |

+

### How to use

|

| 33 |

+

|

| 34 |

+

Here is how to use this model:

```python
from transformers import GLPNFeatureExtractor, GLPNForDepthEstimation
import torch
import numpy as np
from PIL import Image
import requests

url = "http://images.cocodataset.org/val2017/000000039769.jpg"
image = Image.open(requests.get(url, stream=True).raw)

feature_extractor = GLPNFeatureExtractor.from_pretrained("vinvino02/glpn-nyu")
model = GLPNForDepthEstimation.from_pretrained("vinvino02/glpn-nyu")

# prepare image for the model
inputs = feature_extractor(images=image, return_tensors="pt")

with torch.no_grad():
    outputs = model(**inputs)
    predicted_depth = outputs.predicted_depth  # shape (batch, height, width)

# interpolate to original size
# (PIL's image.size is (width, height), so it is reversed to (height, width))
prediction = torch.nn.functional.interpolate(
    predicted_depth.unsqueeze(1),  # add a channel dimension: (batch, 1, height, width)
    size=image.size[::-1],
    mode="bicubic",
    align_corners=False,
)

# visualize the prediction as an 8-bit grayscale image
output = prediction.squeeze().cpu().numpy()
formatted = (output * 255 / np.max(output)).astype("uint8")
depth = Image.fromarray(formatted)
```
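
The snippet above scales each depth map by its own maximum. As a result, the same gray level can stand for different distances in different images. As we understand the Transformers GLPN implementation, the depth head ends with a sigmoid multiplied by `max_depth`. This checkpoint sets `max_depth` to 10 in config.json, presumably meters for NYUv2. Assuming that output range, a minimal NumPy sketch can use a fixed scale instead. `depth_to_uint8` is a hypothetical helper, not part of the library.

```python
import numpy as np


def depth_to_uint8(depth: np.ndarray, max_depth: float = 10.0) -> np.ndarray:
    """Map a depth map in [0, max_depth] to uint8 gray levels with a fixed scale.

    Hypothetical helper: values outside [0, max_depth] are clipped first, so a
    given gray level means the same distance in every image.
    """
    if max_depth <= 0:
        raise ValueError("max_depth must be positive")
    clipped = np.clip(depth, 0.0, max_depth)
    # Round to the nearest gray level instead of truncating toward zero.
    return np.rint(clipped / max_depth * 255.0).astype(np.uint8)


if __name__ == "__main__":
    sample = np.array([[0.0, 2.5], [5.0, 12.0]], dtype=np.float32)
    print(depth_to_uint8(sample))
    # [[  0  64]
    #  [128 255]]
```

With `output` from the snippet above, `Image.fromarray(depth_to_uint8(output))` would replace the per-image normalization. The clipping also absorbs small overshoots that bicubic interpolation can introduce.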

For more code examples, refer to the [documentation](https://huggingface.co/docs/transformers/master/en/model_doc/glpn).

### BibTeX entry and citation info

```bibtex
@article{DBLP:journals/corr/abs-2201-07436,
  author    = {Doyeon Kim and
               Woonghyun Ga and
               Pyunghwan Ahn and
               Donggyu Joo and
               Sehwan Chun and
               Junmo Kim},
  title     = {Global-Local Path Networks for Monocular Depth Estimation with Vertical
               CutDepth},
  journal   = {CoRR},
  volume    = {abs/2201.07436},
  year      = {2022},
  url       = {https://arxiv.org/abs/2201.07436},
  eprinttype = {arXiv},
  eprint    = {2201.07436},
  timestamp = {Fri, 21 Jan 2022 13:57:15 +0100},
  biburl    = {https://dblp.org/rec/journals/corr/abs-2201-07436.bib},
  bibsource = {dblp computer science bibliography, https://dblp.org}
}
```

config.json (added)

{
  "architectures": [
    "GLPNForDepthEstimation"
  ],
  "attention_probs_dropout_prob": 0.0,
  "classifier_dropout_prob": 0.1,
  "decoder_hidden_size": 64,
  "depths": [
    3,
    8,
    27,
    3
  ],
  "downsampling_rates": [
    1,
    4,
    8,
    16
  ],
  "drop_path_rate": 0.1,
  "head_in_index": -1,
  "hidden_act": "gelu",
  "hidden_dropout_prob": 0.0,
  "hidden_sizes": [
    64,
    128,
    320,
    512
  ],
  "image_size": 224,
  "initializer_range": 0.02,
  "layer_norm_eps": 1e-06,
  "max_depth": 10,
  "mlp_ratios": [
    4,
    4,
    4,
    4
  ],
  "model_type": "glpn",
  "num_attention_heads": [
    1,
    2,
    5,
    8
  ],
  "num_channels": 3,
  "num_encoder_blocks": 4,
  "patch_sizes": [
    7,
    3,
    3,
    3
  ],
  "sr_ratios": [
    8,
    4,
    2,
    1
  ],
  "strides": [
    4,
    2,
    2,
    2
  ],
  "torch_dtype": "float32",
  "transformers_version": "4.18.0.dev0"
}
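
The `sr_ratios` entry controls the spatial-reduction ("efficient") self-attention that GLPN inherits from SegFormer. Keys and values are downsampled by `sr_ratio` along each axis before attention, so the key/value sequence shrinks by roughly `sr_ratio ** 2`. The sketch below counts query and key/value tokens per stage. It assumes the reduction is a strided convolution with kernel size and stride both equal to `sr_ratio`. It also assumes stage sizes for a 480x640 input at output strides 4, 8, 16 and 32. Those stage sizes and the helper name `attention_tokens` are illustrative.

```python
from typing import List, Tuple

# Values copied from this checkpoint's config.json.
SR_RATIOS = [8, 4, 2, 1]
NUM_HEADS = [1, 2, 5, 8]
HIDDEN_SIZES = [64, 128, 320, 512]


def attention_tokens(stage_hw: List[Tuple[int, int]]) -> List[Tuple[int, int, int]]:
    """Return (query_tokens, key_value_tokens, head_dim) for each stage.

    Hypothetical helper: assumes keys/values are reduced by a conv with
    kernel_size = stride = sr_ratio (no reduction when sr_ratio == 1).
    """
    result = []
    for (h, w), sr, heads, dim in zip(stage_hw, SR_RATIOS, NUM_HEADS, HIDDEN_SIZES):
        queries = h * w
        kv = (h // sr) * (w // sr) if sr > 1 else queries
        result.append((queries, kv, dim // heads))
    return result


if __name__ == "__main__":
    # Feature-map sizes of the four stages for a 480x640 input.
    stages = [(120, 160), (60, 80), (30, 40), (15, 20)]
    for i, (q, kv, head_dim) in enumerate(attention_tokens(stages), start=1):
        print(f"stage {i}: {q} queries attend to {kv} keys/values, head_dim={head_dim}")
```

Under these assumptions, every stage attends to 300 key/value tokens with a head dimension of 64. The first stage's attention matrix is therefore 19,200 x 300 rather than 19,200 x 19,200.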

preprocessor_config.json (added)

{
  "do_rescale": true,
  "do_resize": true,
  "feature_extractor_type": "GLPNFeatureExtractor",
  "resample": 2,
  "size_divisor": 32
}
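
Read together, these settings describe a small preprocessing pipeline:

- With `do_resize`, height and width are rounded down to the nearest multiple of `size_divisor` (32). The image is then resized with `resample` 2, which is PIL's bilinear filter.
- With `do_rescale`, pixel values are scaled from [0, 255] to [0, 1].

No mean/std normalization is listed. The NumPy/PIL sketch below mirrors those steps under this reading of the config. `glpn_preprocess` is a hypothetical helper, and the library's `GLPNFeatureExtractor` remains the reference implementation.

```python
import numpy as np
from PIL import Image

SIZE_DIVISOR = 32  # "size_divisor" in preprocessor_config.json


def glpn_preprocess(image: Image.Image, size_divisor: int = SIZE_DIVISOR) -> np.ndarray:
    """Return a float32 array of shape (3, H, W) with H and W multiples of size_divisor.

    Hypothetical helper mirroring do_resize / do_rescale from the config.
    """
    image = image.convert("RGB")
    width, height = image.size  # PIL reports (width, height)
    # do_resize: round each side down to the closest multiple of size_divisor.
    new_h = height // size_divisor * size_divisor
    new_w = width // size_divisor * size_divisor
    if new_h == 0 or new_w == 0:
        raise ValueError("image is smaller than size_divisor")
    # resample=2 in the config corresponds to PIL's bilinear filter.
    resized = image.resize((new_w, new_h), resample=Image.BILINEAR)
    # do_rescale: map uint8 pixels from [0, 255] to [0.0, 1.0].
    array = np.asarray(resized, dtype=np.float32) / 255.0
    # Channels-first layout, as PyTorch models expect.
    return array.transpose(2, 0, 1)


if __name__ == "__main__":
    demo = Image.new("RGB", (641, 427), color=(255, 128, 0))
    print(glpn_preprocess(demo).shape)  # (3, 416, 640)
```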

pytorch_model.bin (added)

version https://git-lfs.github.com/spec/v1
oid sha256:c353b12e5f4e5fafd0053d1c19bf60dfb1562b74db4728c6920a2cf44782b603
size 245258793
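
The committed `pytorch_model.bin` is a Git LFS pointer rather than the weights themselves. `oid` is the SHA-256 of the real file, and `size` is its length in bytes (about 245 MB here). A standard-library sketch for checking a downloaded copy against such a pointer could look like the following. `parse_lfs_pointer` and `verify_against_pointer` are hypothetical helper names.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Dict


def parse_lfs_pointer(text: str) -> Dict[str, str]:
    """Parse the 'key value' lines of a Git LFS pointer file into a dict."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields


def verify_against_pointer(path: Path, pointer_text: str, chunk_size: int = 1 << 20) -> bool:
    """Return True if the file's size and SHA-256 match the pointer's size and oid."""
    fields = parse_lfs_pointer(pointer_text)
    algo, _, expected_hex = fields["oid"].partition(":")
    if algo != "sha256":
        raise ValueError(f"unsupported oid algorithm: {algo}")
    # Cheap size check first, before hashing the whole file.
    if path.stat().st_size != int(fields["size"]):
        return False
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        # Hash in chunks so a large checkpoint never sits in memory all at once.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest() == expected_hex


POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:c353b12e5f4e5fafd0053d1c19bf60dfb1562b74db4728c6920a2cf44782b603
size 245258793
"""

if __name__ == "__main__":
    # Self-check on a small temporary file instead of the real checkpoint.
    with tempfile.TemporaryDirectory() as tmp:
        blob = Path(tmp) / "blob.bin"
        blob.write_bytes(b"glpn" * 1000)
        digest = hashlib.sha256(blob.read_bytes()).hexdigest()
        pointer = f"version https://git-lfs.github.com/spec/v1\noid sha256:{digest}\nsize 4000\n"
        print(verify_against_pointer(blob, pointer))  # True: matching pointer
        print(verify_against_pointer(blob, POINTER))  # False: size differs
```

For a real download, `verify_against_pointer(Path("pytorch_model.bin"), POINTER)` should return True if the file is intact.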
