Seq vs Seq: An Open Suite of Paired Encoders and Decoders

Abstract

The large language model (LLM) community focuses almost exclusively on decoder-only language models, since they are easier to use for text generation. However, a large subset of the community still uses encoder-only models for tasks such as classification or retrieval. Previous work has attempted to compare these architectures, but has been forced to compare models that differ in parameter count, training techniques, and datasets. We introduce the SOTA open-data Ettin suite of models: paired encoder-only and decoder-only models ranging from 17 million to 1 billion parameters, trained on up to 2 trillion tokens. Using the same recipe for both encoder-only and decoder-only models yields SOTA results in both categories for their respective sizes, beating ModernBERT as an encoder and Llama 3.2 and SmolLM2 as decoders. Like previous work, we find that encoder-only models excel at classification and retrieval tasks while decoders excel at generative tasks. However, we show that adapting a decoder model to encoder tasks (and vice versa) through continued training is subpar compared to using a model trained with the opposite objective from the start (e.g., a 400M encoder outperforms a 1B decoder on MNLI, and vice versa for generative tasks). We open-source all artifacts of this study, including training data, training order segmented by checkpoint, and 200+ checkpoints, to allow future work to analyze or extend all aspects of training.

Community

Unfortunately, results on token classification are missing, but don't worry, I will run them soon 😅

Guys please:

This is not an AGI model that needs "additional safety tests and review high-risk areas" (quote from our famous "Open"AI).

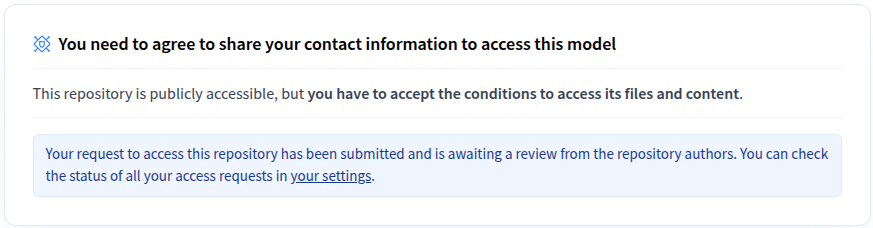

Hey,

Sorry about that. I believe @orionweller fixed it (it was before the official release ahah)!

Can you check now?

Ah, sorry @stefan-it you were early and they were gated pre-release. They should all be open now :) Looking forward to seeing token-level results!