Commit 058960f

1 Parent(s): 3f5c88f

Neural Style Transfer using PaddleHub model and Clip.

Clip is used to get an image for content based on text input.

- .gitattributes +1 -0

- .gitignore +1 -0

- README.md +4 -4

- app.py +91 -0

- packages.txt +3 -0

- requirements.txt +4 -0

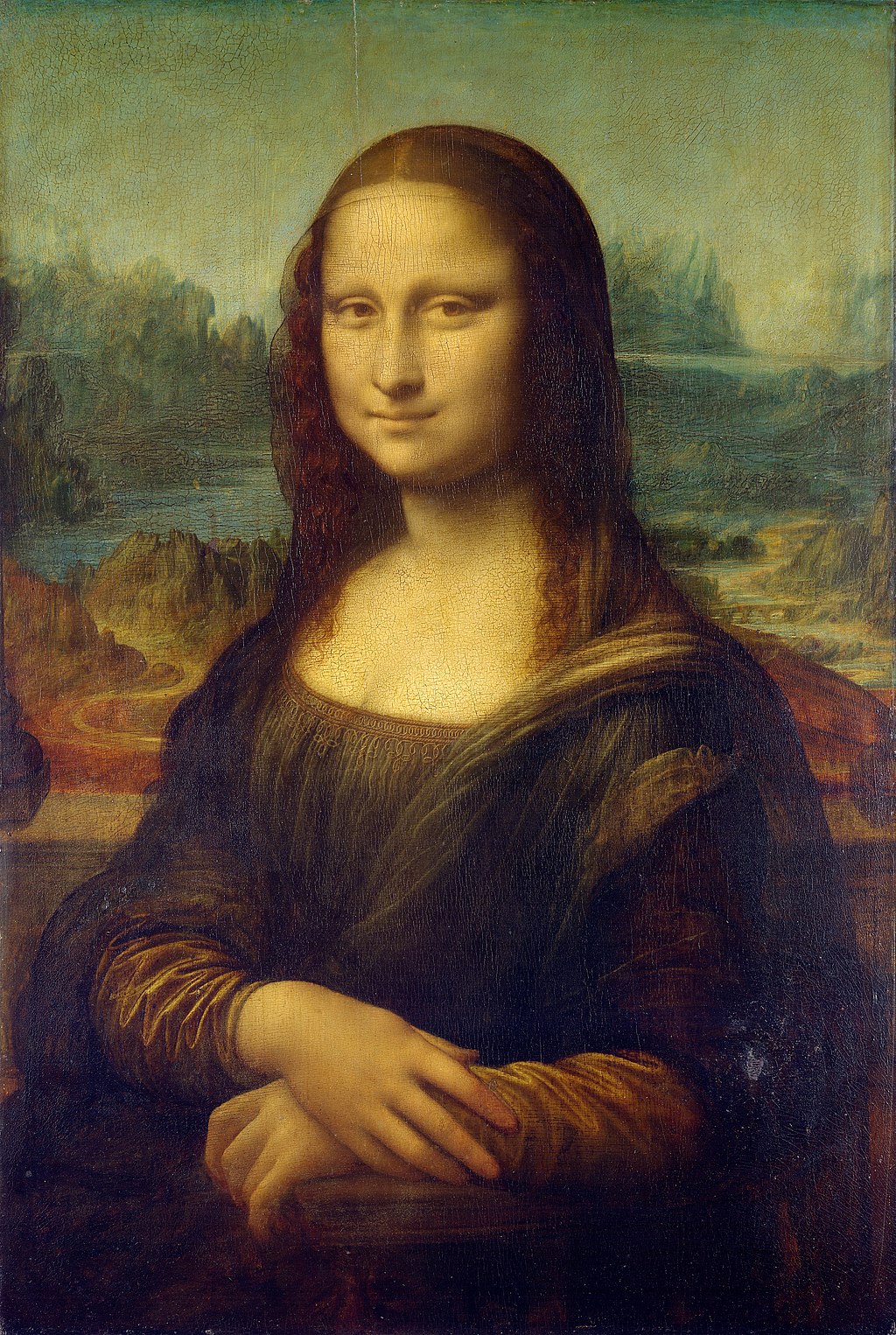

- styles/mona1.jpeg +0 -0

- styles/starry.jpeg +0 -0

- unsplash-dataset/features.npy +3 -0

- unsplash-dataset/photo_ids.csv +0 -0

- unsplash-dataset/photos.tsv000 +0 -0

.gitattributes

CHANGED

@@ -25,3 +25,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zstandard filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+unsplash-dataset/features.npy filter=lfs diff=lfs merge=lfs -text

.gitignore

ADDED

@@ -0,0 +1 @@
+gradio_queue.db

README.md

CHANGED

@@ -1,11 +1,11 @@
 ---
 title: Neural Style Transfer
-emoji:
-colorFrom:
-colorTo:
+emoji: 🔥
+colorFrom: pink
+colorTo: yellow
 sdk: gradio
 app_file: app.py
-pinned:
+pinned: true
 ---
 
 # Configuration

app.py

ADDED

@@ -0,0 +1,91 @@
+import os
+from io import BytesIO
+import requests
+
+# Interface utilities
+import gradio as gr
+
+# Data utilities
+import numpy as np
+import pandas as pd
+
+# Image utilities
+from PIL import Image
+import cv2
+
+# Clip Model
+import torch
+from transformers import CLIPTokenizer, CLIPModel
+
+# Style Transfer Model
+import paddlehub as hub
+
+
+
+os.system("hub install stylepro_artistic==1.0.1")
+stylepro_artistic = hub.Module(name="stylepro_artistic")
+
+
+
+# Clip Model
+device = "cuda" if torch.cuda.is_available() else "cpu"
+model = CLIPModel.from_pretrained("openai/clip-vit-base-patch32")
+tokenizer = CLIPTokenizer.from_pretrained("openai/clip-vit-base-patch32")
+model = model.to(device)
+
+# Load Data
+photos = pd.read_csv("unsplash-dataset/photos.tsv000", sep="\t", header=0)
+photo_features = np.load("unsplash-dataset/features.npy")
+photo_ids = pd.read_csv("unsplash-dataset/photo_ids.csv")
+photo_ids = list(photo_ids["photo_id"])
+
+def image_from_text(text_input):
+    ## Inference
+    with torch.no_grad():
+        inputs = tokenizer([text_input], padding=True, return_tensors="pt")
+        text_features = model.get_text_features(**inputs).cpu().numpy()
+
+    ## Find similarity
+    similarities = list((text_features @ photo_features.T).squeeze(0))
+
+    ## Return best image :)
+    idx = sorted(zip(similarities, range(photo_features.shape[0])), key=lambda x: x[0], reverse=True)[0][1]
+    photo_id = photo_ids[idx]
+    photo_data = photos[photos["photo_id"] == photo_id].iloc[0]
+
+    # Download image
+    response = requests.get(photo_data["photo_image_url"] + "?w=640")
+    pil_image = Image.open(BytesIO(response.content)).convert("RGB")
+    open_cv_image = np.array(pil_image)
+    # Convert RGB to BGR
+    open_cv_image = open_cv_image[:, :, ::-1].copy()
+
+    return open_cv_image
+
+def inference(content, style):
+    result = stylepro_artistic.style_transfer(
+        images=[{
+            "content": image_from_text(content),
+            "styles": [cv2.imread(style.name)]
+        }])
+    return Image.fromarray(np.uint8(result[0]["data"])[:,:,::-1]).convert("RGB")
+
+title = "Neural Style Transfer"
+description = "Gradio demo for Neural Style Transfer. To use it, simply enter the text for image content and upload style image. Read more at the links below."
+article = "<p style='text-align: center'><a href='https://arxiv.org/abs/2003.07694'target='_blank'>Parameter-Free Style Projection for Arbitrary Style Transfer</a> | <a href='https://github.com/PaddlePaddle/PaddleHub' target='_blank'>Github Repo</a></br><a href='https://arxiv.org/abs/2103.00020'target='_blank'>Clip paper</a> | <a href='https://huggingface.co/transformers/model_doc/clip.html' target='_blank'>Hugging Face Clip Implementation</a></p>"
+examples=[
+    ["a cute kangaroo", "styles/starry.jpeg"],
+    ["man holding beer", "styles/mona1.jpeg"],
+]
+interface = gr.Interface(inference,
+    inputs=[
+        gr.inputs.Textbox(lines=1, placeholder="Describe the content of the image", default="a cute kangaroo", label="Describe the image to which the style will be applied"),
+        gr.inputs.Image(type="file", label="Style to be applied"),
+    ],
+    outputs=gr.outputs.Image(type="pil"),
+    enable_queue=True,
+    title=title,
+    description=description,
+    article=article,
+    examples=examples)
+interface.launch()
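The lookup in `image_from_text` scores every precomputed photo embedding against the text embedding with a dot product and keeps the index of the highest score. Below is a minimal sketch of that step alone, using made-up 3-dimensional vectors in place of the real CLIP features. The function name `best_match` and the toy arrays are assumptions for illustration and are not part of the commit. The sketch uses `np.argmax` rather than sorting the whole score list, which should select the same top-scoring row without a full sort.

```python
import numpy as np


def best_match(text_features: np.ndarray, photo_features: np.ndarray) -> int:
    """Return the row index of the photo embedding with the highest dot-product score.

    text_features: shape (1, d), the embedding of a single text query.
    photo_features: shape (n, d), one row per photo.
    """
    # (1, d) @ (d, n) -> (1, n); squeeze to a flat vector of n scores.
    similarities = (text_features @ photo_features.T).squeeze(0)
    return int(np.argmax(similarities))


# Toy data (hypothetical): three "photos" in a 3-dimensional feature space.
photo_features = np.array([
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
])
text_features = np.array([[0.1, 0.9, 0.2]])

idx = best_match(text_features, photo_features)
print(idx)
```

In the app, the returned index would then be used to look up the photo ID and its row in the photos table, as the diff above does with `photo_ids[idx]`.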

packages.txt

ADDED

@@ -0,0 +1,3 @@
+ffmpeg
+libsm6
+libxext6

requirements.txt

ADDED

@@ -0,0 +1,4 @@
+paddlepaddle
+paddlehub
+transformers
+torch

styles/mona1.jpeg

ADDED

styles/starry.jpeg

ADDED

unsplash-dataset/features.npy

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:14ef8a96e6b6adae89432ab046909ab600b5793ba47f2c352168696e7eb9dfb0
+size 51191936
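The three added lines are a Git LFS pointer, not the array itself: each line is a `key value` pair giving the spec version, the SHA-256 object ID, and the size in bytes of the real file. Below is a minimal sketch of reading such a pointer, assuming the simple one-pair-per-line layout shown above. The helper name `parse_lfs_pointer` is hypothetical and not part of the commit.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Split each non-empty 'key value' line of a Git LFS pointer into a dict."""
    fields = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        # Split on the first space only; the value may contain ':' or '/'.
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields


# Pointer contents copied from the diff above.
pointer = (
    "version https://git-lfs.github.com/spec/v1\n"
    "oid sha256:14ef8a96e6b6adae89432ab046909ab600b5793ba47f2c352168696e7eb9dfb0\n"
    "size 51191936\n"
)

info = parse_lfs_pointer(pointer)
size_mib = int(info["size"]) / (1024 * 1024)
print(info["oid"].split(":", 1)[0], f"{size_mib:.1f} MiB")
```

The size field works out to roughly 49 MiB, which is consistent with the `.gitattributes` entry in this commit that routes `unsplash-dataset/features.npy` through LFS.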

unsplash-dataset/photo_ids.csv

ADDED

The diff for this file is too large to render.

unsplash-dataset/photos.tsv000

ADDED

The diff for this file is too large to render.