Runtime error

Upload 6 files

- app.py +98 -3

- data/1.png +0 -0

- data/2.png +0 -0

- data/3.png +0 -0

- data/4.png +0 -0

- requirements.txt +6 -0

app.py CHANGED

@@ -1,7 +1,102 @@

```python
import gradio as gr
import numpy as np
from PIL import Image
import random
import matplotlib.pyplot as plt
import torch
from transformers import SegformerForSemanticSegmentation
from transformers import SegformerImageProcessor

image_list = [
    "data/1.png",
    "data/2.png",
    "data/3.png",
    "data/4.png",
]
```

```python
def visualize_instance_seg_mask(mask):
    # Initialize an all-zero RGB image with the same height and width
    # as the segmentation mask
    image = np.zeros((mask.shape[0], mask.shape[1], 3))

    # Assign a random RGB color to every label present in the mask
    labels = np.unique(mask)
    label2color = {
        label: (
            random.randint(0, 255),
            random.randint(0, 255),
            random.randint(0, 255),
        )
        for label in labels
    }

    # Paint each pixel with the color of its label
    for height in range(image.shape[0]):
        for width in range(image.shape[1]):
            image[height, width, :] = label2color[mask[height, width]]

    # Scale to [0, 1] so matplotlib treats the float array as RGB
    image = image / 255
    return image
```
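The per-pixel Python loop in `visualize_instance_seg_mask` visits every pixel one at a time, which gets slow on large satellite tiles. A minimal NumPy sketch of the same mapping, assuming the mask is a 2-D integer array, builds one color per label and colors the whole image with a single fancy-indexing step. The function name `colorize_mask` and its `seed` parameter are hypothetical, not part of the Space.

```python
import numpy as np


def colorize_mask(mask, seed=None):
    """Map each label in a 2-D integer mask to a random RGB color in [0, 1].

    Hypothetical vectorized variant of visualize_instance_seg_mask.
    """
    rng = np.random.default_rng(seed)
    # Unique labels, plus the index into `labels` for every pixel
    labels, inverse = np.unique(mask, return_inverse=True)
    # One random color per label, same 0-255 range as random.randint(0, 255)
    palette = rng.integers(0, 256, size=(len(labels), 3))
    # Look up all pixel colors at once; reshape covers NumPy 1.x and 2.x inverse shapes
    image = palette[inverse.reshape(mask.shape)]
    return image / 255
```

With a fixed `seed`, the same set of labels gets the same colors on every call, which the `random.randint` version does not guarantee.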

```python
def Segformer_Segmentation(image_path, model_id):
    output_save = "output.png"

    # Convert to RGB in case the PNG carries an alpha or palette channel
    test_image = Image.open(image_path).convert("RGB")

    model = SegformerForSemanticSegmentation.from_pretrained(model_id)
    # Load the preprocessing config that belongs to the checkpoint
    # (the constructor's first positional argument is do_resize, not a model ID)
    processor = SegformerImageProcessor.from_pretrained(model_id)

    inputs = processor(images=test_image, return_tensors="pt")
    with torch.no_grad():
        outputs = model(**inputs)

    # Upsample the logits to the input's (height, width) before taking the argmax
    result = processor.post_process_semantic_segmentation(
        outputs, target_sizes=[test_image.size[::-1]]
    )[0]
    result = result.cpu().numpy()
    result = visualize_instance_seg_mask(result)

    # Save the original and the colorized mask side by side
    plt.figure(figsize=(10, 10))
    for plot_index in range(2):
        if plot_index == 0:
            plot_image = test_image
            title = "Original"
        else:
            plot_image = result
            title = "Segmentation"

        plt.subplot(1, 2, plot_index + 1)
        plt.imshow(plot_image)
        plt.title(title)
        plt.axis("off")

    plt.savefig(output_save)
    # Release the figure so repeated calls do not accumulate open figures
    plt.close()

    return output_save
```
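Called without `target_sizes`, `post_process_semantic_segmentation` returns masks at the logits resolution, and SegFormer's decode head predicts at a quarter of the processed input's height and width. With `target_sizes`, transformers upsamples each logits map bilinearly to the requested size and then takes the per-pixel argmax. The NumPy sketch below mimics that step with one simplification: it uses nearest-neighbour upsampling instead of bilinear interpolation, so class boundaries come out blockier. `logits_to_mask` is a hypothetical helper, not a transformers API.

```python
import numpy as np


def logits_to_mask(logits, target_size):
    """Turn (num_labels, h, w) logits into an (H, W) label mask.

    Simplified stand-in for post_process_semantic_segmentation with
    target_sizes: nearest-neighbour instead of bilinear upsampling.
    """
    _, h, w = logits.shape
    out_h, out_w = target_size
    # Source row/column that each output row/column maps to
    rows = (np.arange(out_h) * h) // out_h
    cols = (np.arange(out_w) * w) // out_w
    # Upsample every class map at once: shape (num_labels, out_h, out_w)
    upsampled = logits[:, rows[:, None], cols[None, :]]
    # Highest-scoring class per pixel
    return upsampled.argmax(axis=0)
```

Because nearest-neighbour upsampling only copies values, taking the argmax before or after it yields the same mask; with bilinear interpolation the order matters, which is why transformers interpolates the logits first.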

```python
inputs = [
    gr.Image(type="filepath", label="Input Image"),
    gr.Dropdown(
        choices=["deprem-ml/deprem_satellite_semantic_whu"],
        label="Model ID",
        value="deprem-ml/deprem_satellite_semantic_whu",
    ),
]

outputs = gr.Image(type="filepath", label="Segmentation")

examples = [
    [image_list[0], "deprem-ml/deprem_satellite_semantic_whu"],
    [image_list[1], "deprem-ml/deprem_satellite_semantic_whu"],
    [image_list[2], "deprem-ml/deprem_satellite_semantic_whu"],
    [image_list[3], "deprem-ml/deprem_satellite_semantic_whu"],
]

title = "Deprem ML - Segformer Semantic Segmentation"

demo_app = gr.Interface(
    Segformer_Segmentation,
    inputs,
    outputs,
    examples=examples,
    title=title,
    cache_examples=True,
)

# Enable the request queue via queue() instead of the deprecated launch(enable_queue=...)
demo_app.queue().launch(debug=True)
```
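With `cache_examples=True`, Gradio runs `Segformer_Segmentation` on every example when the app starts, and each call loads the model and processor again. One way to avoid the repeated loads, sketched here with the standard library's `functools.lru_cache`, is to memoize a loader keyed by `model_id`. `load_components` is hypothetical, and its placeholder body only counts calls so the sketch runs offline; in the Space it would return the loaded model and processor.

```python
from functools import lru_cache

LOAD_CALLS = {"count": 0}  # instrumentation for this sketch only


@lru_cache(maxsize=4)
def load_components(model_id):
    """Load (model, processor) once per model_id and reuse them afterwards.

    In the Space the body would be along the lines of:
        model = SegformerForSemanticSegmentation.from_pretrained(model_id)
        processor = SegformerImageProcessor.from_pretrained(model_id)
        return model, processor
    A placeholder tuple stands in here so no weights are downloaded.
    """
    LOAD_CALLS["count"] += 1
    return ("model:" + model_id, "processor:" + model_id)
```

Inside `Segformer_Segmentation`, a call such as `model, processor = load_components(model_id)` would then stand in for the lines that load the model and processor, so only the first request per model ID pays the loading cost.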

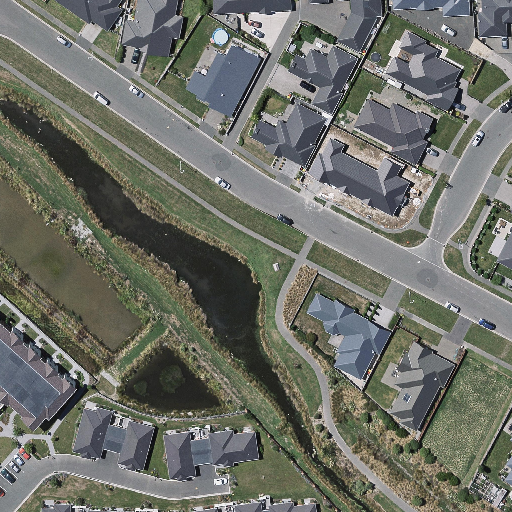

data/1.png ADDED

data/2.png ADDED

data/3.png ADDED

data/4.png ADDED

requirements.txt ADDED

@@ -0,0 +1,6 @@

```text
gradio==3.18.0
matplotlib==3.6.2
numpy==1.24.2
Pillow==9.4.0
torch==1.12.1
transformers==4.26.0
```