Upload folder using huggingface_hub

- Spark-TTS-0.5B/.gitattributes +47 -0

- Spark-TTS-0.5B/BiCodec/config.yaml +60 -0

- Spark-TTS-0.5B/BiCodec/model.safetensors +3 -0

- Spark-TTS-0.5B/config.yaml +7 -0

- Spark-TTS-0.5B/wav2vec2-large-xlsr-53/README.md +29 -0

- Spark-TTS-0.5B/wav2vec2-large-xlsr-53/config.json +83 -0

- Spark-TTS-0.5B/wav2vec2-large-xlsr-53/preprocessor_config.json +9 -0

- Spark-TTS-0.5B/wav2vec2-large-xlsr-53/pytorch_model.bin +3 -0

Spark-TTS-0.5B/.gitattributes

ADDED

|

@@ -0,0 +1,47 @@

| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

LLM/tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

wav2vec2-large-xlsr-53/pytorch_model.bin filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

LLM/model.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

BiCodec/model.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

src/figures/infer_control.png filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

src/figures/infer_voice_cloning.png filter=lfs diff=lfs merge=lfs -text

|

| 42 |

+

src/logo/HKUST.jpg filter=lfs diff=lfs merge=lfs -text

|

| 43 |

+

src/logo/NPU.jpg filter=lfs diff=lfs merge=lfs -text

|

| 44 |

+

src/logo/SJU.jpg filter=lfs diff=lfs merge=lfs -text

|

| 45 |

+

src/logo/SparkTTS.png filter=lfs diff=lfs merge=lfs -text

|

| 46 |

+

src/logo/mobvoi.jpg filter=lfs diff=lfs merge=lfs -text

|

| 47 |

+

src/logo/mobvoi.png filter=lfs diff=lfs merge=lfs -text

|
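
The patterns above decide which files Git stores as LFS pointers rather than as regular blobs. A minimal sketch of that decision, assuming only a handful of the slash-free patterns and approximating Git's matching with `fnmatchcase` on the file name (real gitattributes matching has more rules, and path patterns such as `LLM/tokenizer.json` are not handled here). The helper name `is_lfs_tracked` is hypothetical:

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# A few of the 47 patterns listed in the .gitattributes diff (subset chosen for illustration).
LFS_PATTERNS = ["*.bin", "*.safetensors", "*.pt", "*.onnx", "*.tar.*"]


def is_lfs_tracked(path: str, patterns=LFS_PATTERNS) -> bool:
    """Approximate check: slash-free patterns are compared with the file name only."""
    name = PurePosixPath(path).name
    return any(fnmatchcase(name, pattern) for pattern in patterns)


if __name__ == "__main__":
    for candidate in (
        "BiCodec/model.safetensors",
        "wav2vec2-large-xlsr-53/pytorch_model.bin",
        "BiCodec/config.yaml",
    ):
        print(candidate, is_lfs_tracked(candidate))
```

Under these assumptions the two weight files match an LFS pattern and the YAML config does not, which agrees with the diff: the weights appear as 3-line pointers while the configs appear in full.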

Spark-TTS-0.5B/BiCodec/config.yaml

ADDED

|

@@ -0,0 +1,60 @@

| 1 |

+

audio_tokenizer:

|

| 2 |

+

mel_params:

|

| 3 |

+

sample_rate: 16000

|

| 4 |

+

n_fft: 1024

|

| 5 |

+

win_length: 640

|

| 6 |

+

hop_length: 320

|

| 7 |

+

mel_fmin: 10

|

| 8 |

+

mel_fmax: null

|

| 9 |

+

num_mels: 128

|

| 10 |

+

|

| 11 |

+

encoder:

|

| 12 |

+

input_channels: 1024

|

| 13 |

+

vocos_dim: 384

|

| 14 |

+

vocos_intermediate_dim: 2048

|

| 15 |

+

vocos_num_layers: 12

|

| 16 |

+

out_channels: 1024

|

| 17 |

+

sample_ratios: [1,1]

|

| 18 |

+

|

| 19 |

+

decoder:

|

| 20 |

+

input_channel: 1024

|

| 21 |

+

channels: 1536

|

| 22 |

+

rates: [8, 5, 4, 2]

|

| 23 |

+

kernel_sizes: [16,11,8,4]

|

| 24 |

+

|

| 25 |

+

quantizer:

|

| 26 |

+

input_dim: 1024

|

| 27 |

+

codebook_size: 8192

|

| 28 |

+

codebook_dim: 8

|

| 29 |

+

commitment: 0.25

|

| 30 |

+

codebook_loss_weight: 2.0

|

| 31 |

+

use_l2_normlize: True

|

| 32 |

+

threshold_ema_dead_code: 0.2

|

| 33 |

+

|

| 34 |

+

speaker_encoder:

|

| 35 |

+

input_dim: 128

|

| 36 |

+

out_dim: 1024

|

| 37 |

+

latent_dim: 128

|

| 38 |

+

token_num: 32

|

| 39 |

+

fsq_levels: [4, 4, 4, 4, 4, 4]

|

| 40 |

+

fsq_num_quantizers: 1

|

| 41 |

+

|

| 42 |

+

prenet:

|

| 43 |

+

input_channels: 1024

|

| 44 |

+

vocos_dim: 384

|

| 45 |

+

vocos_intermediate_dim: 2048

|

| 46 |

+

vocos_num_layers: 12

|

| 47 |

+

out_channels: 1024

|

| 48 |

+

condition_dim: 1024

|

| 49 |

+

sample_ratios: [1,1]

|

| 50 |

+

use_tanh_at_final: False

|

| 51 |

+

|

| 52 |

+

postnet:

|

| 53 |

+

input_channels: 1024

|

| 54 |

+

vocos_dim: 384

|

| 55 |

+

vocos_intermediate_dim: 2048

|

| 56 |

+

vocos_num_layers: 6

|

| 57 |

+

out_channels: 1024

|

| 58 |

+

use_tanh_at_final: False

|

| 59 |

+

|

| 60 |

+

|
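
Several values in this config are related by simple arithmetic. The sketch below works through them, assuming that `hop_length` is the spacing between frames in samples, that the decoder `rates` are per-stage upsampling factors, and that `fsq_levels` lists the number of levels per quantized dimension. These readings are inferred from the key names, not confirmed by the diff:

```python
import math

# Values copied from BiCodec/config.yaml.
sample_rate = 16000
hop_length = 320
decoder_rates = [8, 5, 4, 2]
codebook_size = 8192
fsq_levels = [4, 4, 4, 4, 4, 4]

# Frames per second if one frame is produced every hop_length samples.
frames_per_second = sample_rate / hop_length

# Total upsampling if the decoder stages are applied in sequence.
total_upsampling = math.prod(decoder_rates)

# Number of distinct codes an FSQ with these levels can represent.
fsq_codebook_size = math.prod(fsq_levels)

# Bits needed to index one entry of the quantizer codebook.
bits_per_code = math.log2(codebook_size)

if __name__ == "__main__":
    print(frames_per_second, total_upsampling, fsq_codebook_size, bits_per_code)
```

Under those assumptions the decoder's total upsampling (320) equals `hop_length`, so each frame would map back to exactly one hop of waveform samples.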

Spark-TTS-0.5B/BiCodec/model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e9940cd48d4446e4340ced82d234bf5618350dd9f5db900ebe47a4fdb03867ec

|

| 3 |

+

size 625518756

|
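
The three lines above are a Git LFS pointer, not the weights themselves: each line is a key followed by a space and a value. A minimal parsing sketch, with the hypothetical helper name `parse_lfs_pointer`:

```python
POINTER_TEXT = """\
version https://git-lfs.github.com/spec/v1
oid sha256:e9940cd48d4446e4340ced82d234bf5618350dd9f5db900ebe47a4fdb03867ec
size 625518756
"""


def parse_lfs_pointer(text: str) -> dict:
    """Split each 'key value' line, then separate the hash algorithm from the digest."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algorithm, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": algorithm,
        "digest": digest,
        "size": int(fields["size"]),  # size of the real file in bytes
    }


pointer = parse_lfs_pointer(POINTER_TEXT)

if __name__ == "__main__":
    print(pointer["hash_algo"], pointer["size"] / 2**20, "MiB")
```

The `size` field gives the length of the actual `model.safetensors` in bytes, which is why the diff shows `+3 -0` for a file of several hundred megabytes.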

Spark-TTS-0.5B/config.yaml

ADDED

|

@@ -0,0 +1,7 @@

| 1 |

+

highpass_cutoff_freq: 40

|

| 2 |

+

sample_rate: 16000

|

| 3 |

+

segment_duration: 2.4 # (s)

|

| 4 |

+

max_val_duration: 12 # (s)

|

| 5 |

+

latent_hop_length: 320

|

| 6 |

+

ref_segment_duration: 6

|

| 7 |

+

volume_normalize: true

|
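
The durations in this config translate into sample and frame counts once combined with `sample_rate` and `latent_hop_length`. A small sketch, assuming `ref_segment_duration` is also in seconds (it carries no unit comment in the file) and that one latent frame covers `latent_hop_length` samples:

```python
# Values copied from Spark-TTS-0.5B/config.yaml.
sample_rate = 16000
latent_hop_length = 320
segment_duration = 2.4      # seconds, per the comment in the file
max_val_duration = 12       # seconds, per the comment in the file
ref_segment_duration = 6    # assumed to be seconds


def to_samples(seconds: float) -> int:
    """Convert a duration to a whole number of samples."""
    return round(seconds * sample_rate)


def to_latent_frames(seconds: float) -> int:
    """Number of full latent frames that fit in the duration."""
    return to_samples(seconds) // latent_hop_length


if __name__ == "__main__":
    for name, seconds in [
        ("segment", segment_duration),
        ("reference segment", ref_segment_duration),
        ("max validation clip", max_val_duration),
    ]:
        print(name, to_samples(seconds), "samples,", to_latent_frames(seconds), "frames")
```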

Spark-TTS-0.5B/wav2vec2-large-xlsr-53/README.md

ADDED

|

@@ -0,0 +1,29 @@

| 1 |

+

---

|

| 2 |

+

language: multilingual

|

| 3 |

+

datasets:

|

| 4 |

+

- common_voice

|

| 5 |

+

tags:

|

| 6 |

+

- speech

|

| 7 |

+

license: apache-2.0

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

+

# Wav2Vec2-XLSR-53

|

| 11 |

+

|

| 12 |

+

[Facebook's XLSR-Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/)

|

| 13 |

+

|

| 14 |

+

The base model pretrained on 16kHz sampled speech audio. When using the model make sure that your speech input is also sampled at 16Khz. Note that this model should be fine-tuned on a downstream task, like Automatic Speech Recognition. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for more information.

|

| 15 |

+

|

| 16 |

+

[Paper](https://arxiv.org/abs/2006.13979)

|

| 17 |

+

|

| 18 |

+

Authors: Alexis Conneau, Alexei Baevski, Ronan Collobert, Abdelrahman Mohamed, Michael Auli

|

| 19 |

+

|

| 20 |

+

**Abstract**

|

| 21 |

+

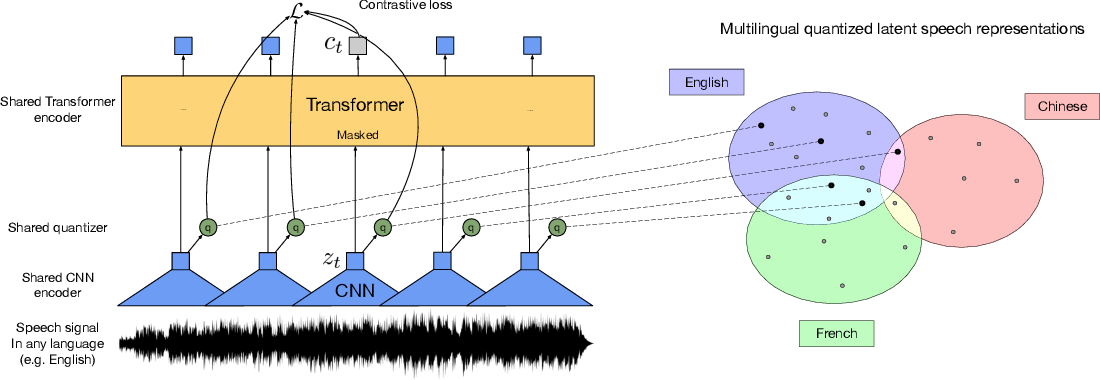

This paper presents XLSR which learns cross-lingual speech representations by pretraining a single model from the raw waveform of speech in multiple languages. We build on wav2vec 2.0 which is trained by solving a contrastive task over masked latent speech representations and jointly learns a quantization of the latents shared across languages. The resulting model is fine-tuned on labeled data and experiments show that cross-lingual pretraining significantly outperforms monolingual pretraining. On the CommonVoice benchmark, XLSR shows a relative phoneme error rate reduction of 72% compared to the best known results. On BABEL, our approach improves word error rate by 16% relative compared to a comparable system. Our approach enables a single multilingual speech recognition model which is competitive to strong individual models. Analysis shows that the latent discrete speech representations are shared across languages with increased sharing for related languages. We hope to catalyze research in low-resource speech understanding by releasing XLSR-53, a large model pretrained in 53 languages.

|

| 22 |

+

|

| 23 |

+

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.

|

| 24 |

+

|

| 25 |

+

# Usage

|

| 26 |

+

|

| 27 |

+

See [this notebook](https://colab.research.google.com/github/patrickvonplaten/notebooks/blob/master/Fine_Tune_XLSR_Wav2Vec2_on_Turkish_ASR_with_%F0%9F%A4%97_Transformers.ipynb) for more information on how to fine-tune the model.

|

| 28 |

+

|

| 29 |

+

|

Spark-TTS-0.5B/wav2vec2-large-xlsr-53/config.json

ADDED

|

@@ -0,0 +1,83 @@

| 1 |

+

{

|

| 2 |

+

"activation_dropout": 0.0,

|

| 3 |

+

"apply_spec_augment": true,

|

| 4 |

+

"architectures": [

|

| 5 |

+

"Wav2Vec2ForPreTraining"

|

| 6 |

+

],

|

| 7 |

+

"attention_dropout": 0.1,

|

| 8 |

+

"bos_token_id": 1,

|

| 9 |

+

"codevector_dim": 768,

|

| 10 |

+

"contrastive_logits_temperature": 0.1,

|

| 11 |

+

"conv_bias": true,

|

| 12 |

+

"conv_dim": [

|

| 13 |

+

512,

|

| 14 |

+

512,

|

| 15 |

+

512,

|

| 16 |

+

512,

|

| 17 |

+

512,

|

| 18 |

+

512,

|

| 19 |

+

512

|

| 20 |

+

],

|

| 21 |

+

"conv_kernel": [

|

| 22 |

+

10,

|

| 23 |

+

3,

|

| 24 |

+

3,

|

| 25 |

+

3,

|

| 26 |

+

3,

|

| 27 |

+

2,

|

| 28 |

+

2

|

| 29 |

+

],

|

| 30 |

+

"conv_stride": [

|

| 31 |

+

5,

|

| 32 |

+

2,

|

| 33 |

+

2,

|

| 34 |

+

2,

|

| 35 |

+

2,

|

| 36 |

+

2,

|

| 37 |

+

2

|

| 38 |

+

],

|

| 39 |

+

"ctc_loss_reduction": "sum",

|

| 40 |

+

"ctc_zero_infinity": false,

|

| 41 |

+

"diversity_loss_weight": 0.1,

|

| 42 |

+

"do_stable_layer_norm": true,

|

| 43 |

+

"eos_token_id": 2,

|

| 44 |

+

"feat_extract_activation": "gelu",

|

| 45 |

+

"feat_extract_dropout": 0.0,

|

| 46 |

+

"feat_extract_norm": "layer",

|

| 47 |

+

"feat_proj_dropout": 0.1,

|

| 48 |

+

"feat_quantizer_dropout": 0.0,

|

| 49 |

+

"final_dropout": 0.0,

|

| 50 |

+

"gradient_checkpointing": false,

|

| 51 |

+

"hidden_act": "gelu",

|

| 52 |

+

"hidden_dropout": 0.1,

|

| 53 |

+

"hidden_size": 1024,

|

| 54 |

+

"initializer_range": 0.02,

|

| 55 |

+

"intermediate_size": 4096,

|

| 56 |

+

"layer_norm_eps": 1e-05,

|

| 57 |

+

"layerdrop": 0.1,

|

| 58 |

+

"mask_channel_length": 10,

|

| 59 |

+

"mask_channel_min_space": 1,

|

| 60 |

+

"mask_channel_other": 0.0,

|

| 61 |

+

"mask_channel_prob": 0.0,

|

| 62 |

+

"mask_channel_selection": "static",

|

| 63 |

+

"mask_feature_length": 10,

|

| 64 |

+

"mask_feature_prob": 0.0,

|

| 65 |

+

"mask_time_length": 10,

|

| 66 |

+

"mask_time_min_space": 1,

|

| 67 |

+

"mask_time_other": 0.0,

|

| 68 |

+

"mask_time_prob": 0.075,

|

| 69 |

+

"mask_time_selection": "static",

|

| 70 |

+

"model_type": "wav2vec2",

|

| 71 |

+

"num_attention_heads": 16,

|

| 72 |

+

"num_codevector_groups": 2,

|

| 73 |

+

"num_codevectors_per_group": 320,

|

| 74 |

+

"num_conv_pos_embedding_groups": 16,

|

| 75 |

+

"num_conv_pos_embeddings": 128,

|

| 76 |

+

"num_feat_extract_layers": 7,

|

| 77 |

+

"num_hidden_layers": 24,

|

| 78 |

+

"num_negatives": 100,

|

| 79 |

+

"pad_token_id": 0,

|

| 80 |

+

"proj_codevector_dim": 768,

|

| 81 |

+

"transformers_version": "4.7.0.dev0",

|

| 82 |

+

"vocab_size": 32

|

| 83 |

+

}

|
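
The `conv_kernel` and `conv_stride` lists fix how many feature frames the convolutional front end produces for a given waveform. A sketch of that calculation, assuming unpadded 1-D convolutions applied in sequence, so each layer maps a length `L` to `(L - kernel) // stride + 1`:

```python
import math

# Values copied from wav2vec2-large-xlsr-53/config.json.
CONV_KERNEL = [10, 3, 3, 3, 3, 2, 2]
CONV_STRIDE = [5, 2, 2, 2, 2, 2, 2]


def feature_frames(num_samples: int) -> int:
    """Output length after all seven unpadded conv layers."""
    length = num_samples
    for kernel, stride in zip(CONV_KERNEL, CONV_STRIDE):
        length = (length - kernel) // stride + 1
    return length


def receptive_field() -> int:
    """Number of input samples that influence a single output frame."""
    field, jump = 1, 1
    for kernel, stride in zip(CONV_KERNEL, CONV_STRIDE):
        field += (kernel - 1) * jump
        jump *= stride
    return field


total_stride = math.prod(CONV_STRIDE)

if __name__ == "__main__":
    print(total_stride, receptive_field(), feature_frames(16000))
```

With these lists the total stride is 320 samples and the receptive field is 400 samples, that is 20 ms and 25 ms at 16 kHz, and one second of audio yields 49 frames.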

Spark-TTS-0.5B/wav2vec2-large-xlsr-53/preprocessor_config.json

ADDED

|

@@ -0,0 +1,9 @@

| 1 |

+

{

|

| 2 |

+

"do_normalize": true,

|

| 3 |

+

"feature_extractor_type": "Wav2Vec2FeatureExtractor",

|

| 4 |

+

"feature_size": 1,

|

| 5 |

+

"padding_side": "right",

|

| 6 |

+

"padding_value": 0,

|

| 7 |

+

"return_attention_mask": true,

|

| 8 |

+

"sampling_rate": 16000

|

| 9 |

+

}

|
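
The settings above describe a conventional waveform preprocessing step: normalize each utterance, pad on the right with `padding_value`, and return an attention mask. The following is a plain-Python sketch of that behavior, not the library's implementation. The helper names and the small `eps` constant are assumptions made for illustration:

```python
def normalize(wave, eps=1e-7):
    """Zero-mean, unit-variance scaling of one waveform (do_normalize: true)."""
    mean = sum(wave) / len(wave)
    variance = sum((x - mean) ** 2 for x in wave) / len(wave)
    scale = (variance + eps) ** 0.5  # eps avoids division by zero on silence
    return [(x - mean) / scale for x in wave]


def pad_batch(waves, padding_value=0.0):
    """Right-pad to the longest waveform and build a 1/0 attention mask."""
    max_len = max(len(w) for w in waves)
    values, mask = [], []
    for w in waves:
        pad = max_len - len(w)
        values.append(list(w) + [padding_value] * pad)
        mask.append([1] * len(w) + [0] * pad)
    return values, mask


if __name__ == "__main__":
    batch = [normalize([1.0, 2.0, 3.0]), normalize([0.5, -0.5])]
    values, mask = pad_batch(batch)
    print(values)
    print(mask)
```

Normalizing before padding keeps the padded zeros out of the mean and variance, and the mask lets a downstream model ignore the padded positions.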

Spark-TTS-0.5B/wav2vec2-large-xlsr-53/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:314340227371a608f71adcd5f0de5933824fe77e55822aa4b24dba9c1c364dcb

|

| 3 |

+

size 1269737156

|
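
Because the `oid` in a Git LFS pointer is the SHA-256 of the real file's contents, a downloaded `pytorch_model.bin` can be checked against the pointer above. A minimal sketch that reads from any binary stream. The function name `matches_pointer` is hypothetical, and the demo uses an in-memory stream rather than the 1.2 GB checkpoint:

```python
import hashlib
import io

# Values copied from the pointer for wav2vec2-large-xlsr-53/pytorch_model.bin.
EXPECTED_OID = "314340227371a608f71adcd5f0de5933824fe77e55822aa4b24dba9c1c364dcb"
EXPECTED_SIZE = 1269737156


def matches_pointer(stream, expected_oid: str, expected_size: int,
                    chunk_size: int = 1 << 20) -> bool:
    """Hash the stream in chunks and compare both digest and byte count."""
    digest = hashlib.sha256()
    size = 0
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
        size += len(chunk)
    return size == expected_size and digest.hexdigest() == expected_oid


# Demo on a tiny in-memory payload whose digest is computed on the spot.
demo_payload = b"hello"
demo_ok = matches_pointer(
    io.BytesIO(demo_payload),
    hashlib.sha256(demo_payload).hexdigest(),
    len(demo_payload),
)

if __name__ == "__main__":
    print(demo_ok)
```

For the real file, the same function could be called with `open(path, "rb")` together with `EXPECTED_OID` and `EXPECTED_SIZE`.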