
HomeDock-OS-3B-Instruct

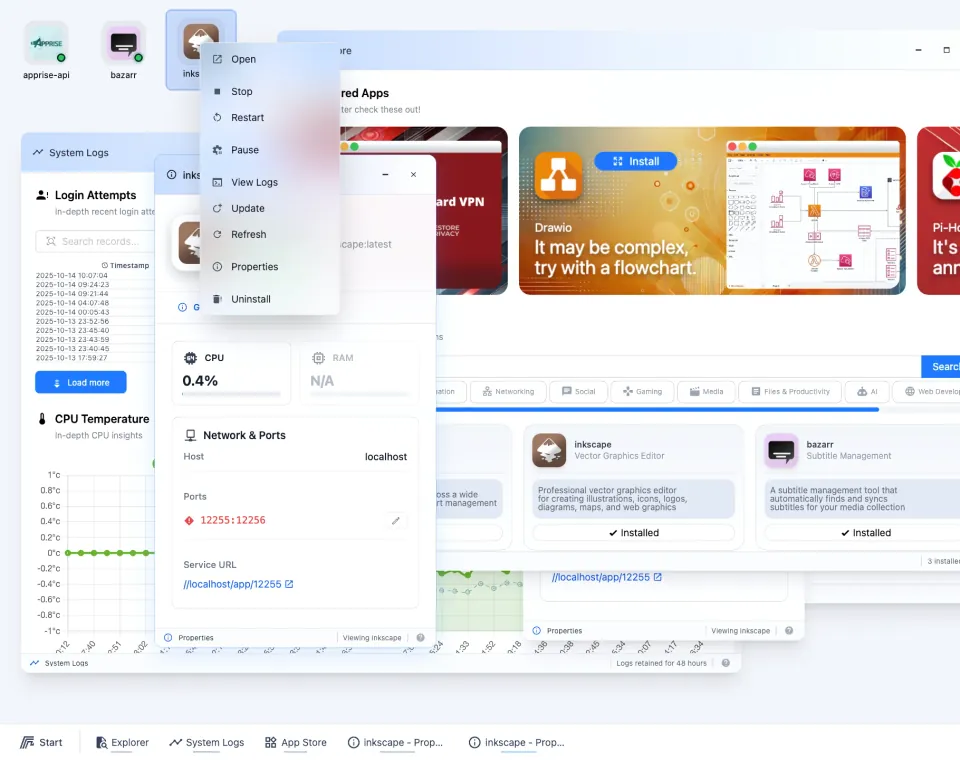

A fine-tuned version of Qwen 2.5 3B Instruct, specialized in HomeDock OS documentation and features, that provides guidance and makes self-hosting easier.

Model Description

HomeDock-OS-3B-Instruct is trained on the complete HomeDock OS knowledge base:

- Documentation: Installation, configuration, SSL, troubleshooting

- Application Features (200+ apps): Plex, Jellyfin, Nextcloud, Home Assistant, and more

- Blog Articles: Tutorials, comparisons, and best practices

- Enterprise Features: Data Space, Secure Tunnel, Audit Trail, and more

Key Features

- Answers questions about HomeDock OS features and configuration

- Provides guidance on self-hosted application setup

- Understands Docker containerization concepts

- Explains App Store applications and their use cases

Quantization

| File | Size | Description |
|---|---|---|
| HomeDock-OS-3B-Instruct.Q4_K_M.gguf | ~1.8 GB | Recommended, best balance |
| HomeDock-OS-3B-Instruct.Q8_0.gguf | ~3.2 GB | Higher quality |
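As a rough illustration of choosing between the two files, the sketch below picks the largest quantization that fits a given memory budget. The file sizes come from the table above. The 1.2x headroom factor for context and runtime buffers is an assumption made for illustration, not a measured figure, and `pick_quantization` is a hypothetical helper rather than part of any tool.

```python
from typing import Optional

# File sizes in GB, taken from the quantization table.
QUANT_FILES = {
    "HomeDock-OS-3B-Instruct.Q4_K_M.gguf": 1.8,
    "HomeDock-OS-3B-Instruct.Q8_0.gguf": 3.2,
}

# Assumed headroom multiplier for KV cache and runtime buffers (illustrative only).
OVERHEAD_FACTOR = 1.2


def pick_quantization(available_gb: float) -> Optional[str]:
    """Return the largest file whose estimated footprint fits, or None if neither fits."""
    fitting = [
        (size, name)
        for name, size in QUANT_FILES.items()
        if size * OVERHEAD_FACTOR <= available_gb
    ]
    # max() compares by size first, so the largest fitting file wins.
    return max(fitting)[1] if fitting else None
```

For example, `pick_quantization(8.0)` would select the Q8_0 file, while a tighter budget falls back to Q4_K_M.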

Usage

Ollama

- Create a Modelfile:

```
FROM ./HomeDock-OS-3B-Instruct.Q4_K_M.gguf

TEMPLATE """<|im_start|>system
{{ .System }}<|im_end|>
<|im_start|>user
{{ .Prompt }}<|im_end|>
<|im_start|>assistant
"""

SYSTEM """You are an expert assistant for HomeDock OS documentation. Provide clear, accurate, and helpful information about HomeDock OS features, configuration, and usage."""

PARAMETER stop "<|im_end|>"
PARAMETER stop "<|im_start|>"
PARAMETER temperature 0.7
```

- Create and run:

```bash
ollama create homedock -f Modelfile
ollama run homedock "What is HomeDock OS?"
```
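Once the `homedock` model exists, it can also be queried from Python through Ollama's local REST API. The following is a minimal sketch using only the standard library. It assumes an Ollama server is running on its default port (11434) and that the model was created under the name `homedock` as shown above; `build_chat_payload` and `ask` are hypothetical helper names.

```python
import json
import urllib.request

# Ollama's default local chat endpoint (assumes a default installation).
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_payload(question: str, model: str = "homedock") -> dict:
    """Build a non-streaming request body for Ollama's /api/chat endpoint."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": question}],
        "stream": False,
    }


def ask(question: str, model: str = "homedock") -> str:
    """Send the question to a running Ollama server and return the reply text."""
    body = json.dumps(build_chat_payload(question, model)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_CHAT_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["message"]["content"]
```

With the server running, `print(ask("What is HomeDock OS?"))` should print the model's answer.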

llama.cpp

```bash
./llama-cli -m HomeDock-OS-3B-Instruct.Q4_K_M.gguf \
  -p "<|im_start|>system\nYou are an expert assistant for HomeDock OS documentation.<|im_end|>\n<|im_start|>user\nWhat is Drop Zone?<|im_end|>\n<|im_start|>assistant\n" \
  -n 256
```
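The prompt passed with `-p` follows the ChatML layout: a system turn, a user turn, and an open assistant turn. A small sketch of building that string programmatically is shown below; `build_chatml_prompt` is a hypothetical helper, and it covers only the single-turn case used in the command above.

```python
def build_chatml_prompt(system: str, user: str) -> str:
    """Format one system turn and one user turn as ChatML, ending at the open assistant turn."""
    return (
        f"<|im_start|>system\n{system}<|im_end|>\n"
        f"<|im_start|>user\n{user}<|im_end|>\n"
        "<|im_start|>assistant\n"
    )


prompt = build_chatml_prompt(
    "You are an expert assistant for HomeDock OS documentation.",
    "What is Drop Zone?",
)
```

Here the string contains real newline characters, whereas the shell command passes `\n` escape sequences.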

LM Studio

- Download the `.gguf` file

- Import into LM Studio

- Use the ChatML preset or configure manually

Python (llama-cpp-python)

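```python
from llama_cpp import Llama

# Load the local GGUF file; n_ctx matches the 2048-token training sequence length.
llm = Llama(model_path="HomeDock-OS-3B-Instruct.Q4_K_M.gguf", n_ctx=2048)

# Send a system prompt plus one user question.
response = llm.create_chat_completion(
    messages=[
        {"role": "system", "content": "You are an expert assistant for HomeDock OS documentation."},
        {"role": "user", "content": "How do I install Plex on HomeDock OS?"},
    ]
)

# The reply text is in the first choice's message.
print(response["choices"][0]["message"]["content"])
```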
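For longer answers it can be convenient to stream the output instead of waiting for the full response. With `stream=True`, `create_chat_completion` yields OpenAI-style chunks whose text arrives in `choices[0]["delta"]`. The sketch below shows one way to reassemble those chunks; `collect_stream` is a hypothetical helper, and the chunk shape is assumed to follow that OpenAI-compatible format.

```python
from typing import Iterable


def collect_stream(chunks: Iterable[dict]) -> str:
    """Concatenate the text deltas from streamed chat-completion chunks."""
    parts = []
    for chunk in chunks:
        # The first chunk usually carries only the role, and the last an empty delta.
        delta = chunk["choices"][0].get("delta", {})
        text = delta.get("content")
        if text:
            parts.append(text)
    return "".join(parts)
```

With the `llm` object from the example above, this could be called as `collect_stream(llm.create_chat_completion(messages=[...], stream=True))`.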

Example Conversations

User: What is HomeDock OS?

Assistant: HomeDock OS is a complete home cloud operating system that simplifies self-hosting. It provides a desktop-like interface in your browser with features like the App Store (200+ pre-configured applications), Drop Zone (encrypted file storage), Control Hub (container management), and automatic SSL configuration. It runs on Linux, Windows, macOS, and as a Docker container.

User: How do I configure SSL?

Assistant: HomeDock OS supports automatic SSL configuration. Navigate to Settings > SSL/HTTPS, enter your domain name, and HomeDock OS will automatically obtain and configure Let's Encrypt certificates. Applications installed through the App Store inherit SSL configuration automatically.

User: What is the difference between Plex and Jellyfin?

Assistant: Both are media server solutions available in the HomeDock OS App Store. Plex offers a polished interface with premium features like hardware transcoding and mobile sync (requires Plex Pass). Jellyfin is fully open-source and free, with no premium tier required. For most self-hosters, Jellyfin provides excellent functionality without subscription costs.

Training Details

| Parameter | Value |
|---|---|
| Base Model | Qwen/Qwen2.5-3B-Instruct |
| Method | LoRA (r=16, alpha=32) |
| Epochs | 3 |
| Learning Rate | 2e-4 |
| Sequence Length | 2048 |
| Training Examples | 788 |
| Total Words | ~99,000 |
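To make the LoRA settings concrete, the sketch below works through the arithmetic they imply under the standard LoRA formulation: the update is scaled by alpha / r, and each adapted weight matrix of shape d_out x d_in gains r * (d_in + d_out) trainable parameters. The 2048 x 2048 matrix is a purely illustrative assumption; the card does not state which modules were adapted.

```python
LORA_R = 16              # rank, from the training table
LORA_ALPHA = 32          # alpha, from the training table
EPOCHS = 3               # from the training table
TRAINING_EXAMPLES = 788  # from the training table


def lora_scaling(r: int = LORA_R, alpha: int = LORA_ALPHA) -> float:
    """Scaling applied to the low-rank update in standard LoRA (alpha / r)."""
    return alpha / r


def lora_added_params(d_in: int, d_out: int, r: int = LORA_R) -> int:
    """Trainable parameters added for one adapted matrix: A is r x d_in, B is d_out x r."""
    return r * (d_in + d_out)


scaling = lora_scaling()                    # 32 / 16
per_matrix = lora_added_params(2048, 2048)  # hypothetical 2048 x 2048 projection
examples_seen = TRAINING_EXAMPLES * EPOCHS  # total example passes over 3 epochs
```

With these values, the scaling factor is 2.0, a 2048 x 2048 matrix would gain 65,536 trainable parameters, and training makes 2,364 example passes in total.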

Training Data

The model was trained on the complete HomeDock OS documentation including:

- Official documentation (MDX)

- Application descriptions (200+ apps)

- Blog posts and tutorials

- Enterprise feature guides

Limitations

- Knowledge is specific to HomeDock OS; may not generalize well to other platforms

- Training data cutoff corresponds to the documentation version at training time

- Best suited for English queries

- May occasionally generate plausible-sounding but incorrect information

License

This model is released under the Apache 2.0 License, same as the base Qwen 2.5 model.

Citation

```bibtex
@misc{homedock-os-3b-instruct,
  author = {Banshee Technologies S.L.},
  title = {HomeDock-OS-3B-Instruct: A Fine-tuned LLM for HomeDock OS Documentation},
  year = {2025},
  publisher = {Hugging Face},
  url = {https://huggingface.co/banshee/HomeDock-OS-3B-Instruct}
}
```

Built with HomeDock OS

homedock.cloud
