html_url stringlengths 48 51 | title stringlengths 5 268 | comments stringlengths 63 51.8k | body stringlengths 0 36.2k ⌀ | comment_length int64 16 1.52k | text stringlengths 164 54.1k | embeddings list |

|---|---|---|---|---|---|---|

https://github.com/huggingface/datasets/issues/3735 | Performance of `datasets` at scale | The most surprising part to me is the saving time. Wondering if it could be due to compression (`ParquetWriter` uses SNAPPY compression by default; it can be turned off with `to_parquet(..., compression=None)`). | # Performance of `datasets` at 1TB scale

## What is this?

During the processing of a large dataset I monitored the performance of the `datasets` library to see if there are any bottlenecks. The insights of this analysis could guide the decision making to improve the performance of the library.

## Dataset

The da... | 32 | Performance of `datasets` at scale

# Performance of `datasets` at 1TB scale

## What is this?

During the processing of a large dataset I monitored the performance of the `datasets` library to see if there are any bottlenecks. The insights of this analysis could guide the decision making to improve the performance ... | [

-0.4659071565,

-0.0334515944,

-0.1042619422,

0.1815135777,

0.0901725069,

0.0411183946,

0.1751108021,

0.3004227877,

0.1423734725,

-0.0201912615,

0.0761042461,

0.1921412945,

-0.0361628421,

0.5749624968,

-0.0226483662,

-0.0665424839,

-0.0241282955,

0.0048626824,

-0.217030704,

-0.0... |

https://github.com/huggingface/datasets/issues/3735 | Performance of `datasets` at scale | +1 to what @mariosasko mentioned. Also, @lvwerra did you parallelize `to_parquet` using similar approach in #2747? (we used multiprocessing at the shard level). I'm working on a similar PR to add multi_proc in `to_parquet` which might give you further speed up.

Stas benchmarked his approach and mine in this [gist](ht... | # Performance of `datasets` at 1TB scale

## What is this?

During the processing of a large dataset I monitored the performance of the `datasets` library to see if there are any bottlenecks. The insights of this analysis could guide the decision making to improve the performance of the library.

## Dataset

The da... | 63 | Performance of `datasets` at scale

# Performance of `datasets` at 1TB scale

## What is this?

During the processing of a large dataset I monitored the performance of the `datasets` library to see if there are any bottlenecks. The insights of this analysis could guide the decision making to improve the performance ... | [

-0.4659071565,

-0.0334515944,

-0.1042619422,

0.1815135777,

0.0901725069,

0.0411183946,

0.1751108021,

0.3004227877,

0.1423734725,

-0.0201912615,

0.0761042461,

0.1921412945,

-0.0361628421,

0.5749624968,

-0.0226483662,

-0.0665424839,

-0.0241282955,

0.0048626824,

-0.217030704,

-0.0... |

https://github.com/huggingface/datasets/issues/3735 | Performance of `datasets` at scale | @mariosasko I did not turn it off but I can try the next time - I have to run the pipeline again, anyway.

@bhavitvyamalik Yes, I also sharded the dataset and used multiprocessing to save each shard. I'll have a closer look at your approach, too. | # Performance of `datasets` at 1TB scale

## What is this?

During the processing of a large dataset I monitored the performance of the `datasets` library to see if there are any bottlenecks. The insights of this analysis could guide the decision making to improve the performance of the library.

## Dataset

The da... | 46 | Performance of `datasets` at scale

# Performance of `datasets` at 1TB scale

## What is this?

During the processing of a large dataset I monitored the performance of the `datasets` library to see if there are any bottlenecks. The insights of this analysis could guide the decision making to improve the performance ... | [

-0.4659071565,

-0.0334515944,

-0.1042619422,

0.1815135777,

0.0901725069,

0.0411183946,

0.1751108021,

0.3004227877,

0.1423734725,

-0.0201912615,

0.0761042461,

0.1921412945,

-0.0361628421,

0.5749624968,

-0.0226483662,

-0.0665424839,

-0.0241282955,

0.0048626824,

-0.217030704,

-0.0... |

https://github.com/huggingface/datasets/issues/3730 | Checksum Error when loading multi-news dataset | Thanks for reporting @byw2.

We are fixing it.

In the meantime, you can load the dataset by passing `ignore_verifications=True`:

```python

dataset = load_dataset("multi_news", ignore_verifications=True) | ## Describe the bug

When using the load_dataset function from datasets module to load the Multi-News dataset, does not load the dataset but throws Checksum Error instead.

## Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = load_dataset("multi_news")

```

## Expected results

... | 24 | Checksum Error when loading multi-news dataset

## Describe the bug

When using the load_dataset function from datasets module to load the Multi-News dataset, does not load the dataset but throws Checksum Error instead.

## Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = load_data... | [

-0.2059844732,

0.119747147,

-0.0531000271,

0.3288653195,

0.1786068529,

0.0489469506,

0.3487609625,

0.2491254061,

0.2209578454,

0.0042153173,

-0.020120943,

0.1327264905,

0.0797101408,

0.2458918542,

-0.1772808731,

0.0411296003,

0.2067429572,

-0.1955725104,

0.1274431944,

-0.031286... |

https://github.com/huggingface/datasets/issues/3729 | Wrong number of examples when loading a text dataset | Hi @kg-nlp, thanks for reporting.

That is weird... I guess we would need some sample data file where this behavior appears to reproduce the bug for further investigation... | ## Describe the bug

when I use load_dataset to read a txt file I find that the number of the samples is incorrect

## Steps to reproduce the bug

```

fr = open('train.txt','r',encoding='utf-8').readlines()

print(len(fr)) # 1199637

datasets = load_dataset('text', data_files={'train': ['train.txt']}, streaming... | 28 | Wrong number of examples when loading a text dataset

## Describe the bug

when I use load_dataset to read a txt file I find that the number of the samples is incorrect

## Steps to reproduce the bug

```

fr = open('train.txt','r',encoding='utf-8').readlines()

print(len(fr)) # 1199637

datasets = load_dataset... | [

-0.0605260655,

-0.2417583913,

-0.0344216228,

0.4838173389,

0.065916352,

0.0262386296,

0.5079740286,

0.0472354554,

-0.023038568,

0.1874682903,

0.444887042,

0.1894766986,

0.0757768676,

0.0255927108,

0.3641293645,

-0.2086357623,

0.2814244628,

-0.0695903748,

-0.1799233258,

0.001248... |

https://github.com/huggingface/datasets/issues/3729 | Wrong number of examples when loading a text dataset | ok, I found the reason why that two results are not same.

there is /u2029 in the text, the datasets will split sentence according to the /u2029,but when I use open function will not do that .

so I want to know which function shell do that

thanks | ## Describe the bug

when I use load_dataset to read a txt file I find that the number of the samples is incorrect

## Steps to reproduce the bug

```

fr = open('train.txt','r',encoding='utf-8').readlines()

print(len(fr)) # 1199637

datasets = load_dataset('text', data_files={'train': ['train.txt']}, streaming... | 48 | Wrong number of examples when loading a text dataset

## Describe the bug

when I use load_dataset to read a txt file I find that the number of the samples is incorrect

## Steps to reproduce the bug

```

fr = open('train.txt','r',encoding='utf-8').readlines()

print(len(fr)) # 1199637

datasets = load_dataset... | [

-0.1179775819,

-0.3109657764,

-0.0979499519,

0.530828476,

-0.21858266,

-0.2745325267,

0.4456655979,

-0.0981146768,

-0.0977490395,

0.2998822629,

0.1997930557,

0.1997048706,

0.2252021432,

0.0837190151,

0.4043927789,

-0.2532298863,

0.3243586123,

0.1103495955,

-0.3028640747,

-0.301... |

https://github.com/huggingface/datasets/issues/3720 | Builder Configuration Update Required on Common Voice Dataset | Hi @aasem, thanks for reporting.

Please note that currently Commom Voice is hosted on our Hub as a community dataset by the Mozilla Foundation. See all Common Voice versions here: https://huggingface.co/mozilla-foundation

Maybe we should add an explaining note in our "legacy" Common Voice canonical script? What d... | Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found. I checked the source file here for the languages support:

ht... | 52 | Builder Configuration Update Required on Common Voice Dataset

Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found.... | [

-0.2856746018,

0.1739170104,

0.0023721412,

-0.1521494836,

0.1797928214,

0.1795553714,

0.0361903384,

0.1813979447,

-0.4638518989,

0.1273631901,

0.0928716883,

-0.051489599,

-0.0403752439,

-0.2595183849,

0.2246842086,

-0.0798487514,

0.0472483151,

0.0587806664,

0.0920704827,

-0.162... |

https://github.com/huggingface/datasets/issues/3720 | Builder Configuration Update Required on Common Voice Dataset | Thank you, @albertvillanova, for the quick response. I am not sure about the exact flow but I guess adding the following lines under the `_Languages` dictionary definition in [common_voice.py](https://github.com/huggingface/datasets/blob/master/datasets/common_voice/common_voice.py) might resolve the issue. I guess the... | Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found. I checked the source file here for the languages support:

ht... | 66 | Builder Configuration Update Required on Common Voice Dataset

Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found.... | [

-0.2908358276,

0.0055588363,

0.0204208232,

-0.0029063479,

0.2136821002,

0.1312917918,

0.0075951642,

0.2225896865,

-0.3800753057,

0.2097702622,

0.121313192,

-0.0567619801,

-0.1200531498,

-0.0659274682,

0.211689651,

-0.2299364358,

0.0533253737,

0.1275778413,

0.1963362843,

-0.1596... |

https://github.com/huggingface/datasets/issues/3720 | Builder Configuration Update Required on Common Voice Dataset | @aasem for compliance reasons, we are no longer updating the `common_voice.py` script.

We agreed with Mozilla Foundation to use their community datasets instead, which will ask you to accept their terms of use:

```

You need to share your contact information to access this dataset.

This repository is publicly ac... | Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found. I checked the source file here for the languages support:

ht... | 192 | Builder Configuration Update Required on Common Voice Dataset

Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found.... | [

-0.2701786458,

0.1846490055,

0.0216178335,

-0.046714481,

0.2218092084,

0.1341910362,

0.0782260299,

0.2611940801,

-0.3444994986,

0.2042435408,

0.0835882351,

0.0007256507,

-0.0550360493,

-0.2131644338,

0.1940619797,

-0.1723414809,

-0.0371397771,

0.0623359494,

0.2901751399,

-0.179... |

https://github.com/huggingface/datasets/issues/3720 | Builder Configuration Update Required on Common Voice Dataset | @albertvillanova

>Maybe we should add an explaining note in our "legacy" Common Voice canonical script?

Yes, I agree we should have a deprecation notice in the canonical script to redirect users to the new script. | Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found. I checked the source file here for the languages support:

ht... | 35 | Builder Configuration Update Required on Common Voice Dataset

Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found.... | [

-0.3522530496,

0.103318274,

0.0201227553,

-0.1326442063,

0.1710538417,

0.1720189899,

0.063082017,

0.2731883228,

-0.3063312769,

0.1558266878,

0.1680767089,

-0.0426007435,

-0.1602720022,

-0.0915840715,

0.1674870849,

-0.1476795226,

0.1083246022,

0.1433466375,

0.1850374937,

-0.1774... |

https://github.com/huggingface/datasets/issues/3720 | Builder Configuration Update Required on Common Voice Dataset | @albertvillanova,

I now get the following error after downloading my access token from the huggingface and passing it to `load_dataset` call:

`AttributeError: 'DownloadManager' object has no attribute 'download_config'`

Any quick pointer on how it might be resolved? | Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found. I checked the source file here for the languages support:

ht... | 37 | Builder Configuration Update Required on Common Voice Dataset

Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found.... | [

-0.3459948599,

0.1322572082,

0.0547214374,

0.1285535246,

0.2149555683,

0.1292428076,

-0.0509486012,

0.1270668805,

-0.2447929829,

0.239728421,

0.0264988188,

-0.1509607136,

-0.1511382908,

-0.0213900078,

0.2012620568,

-0.1719041914,

0.0843130574,

0.1090747863,

0.2482061386,

-0.146... |

https://github.com/huggingface/datasets/issues/3720 | Builder Configuration Update Required on Common Voice Dataset | @aasem What version of `datasets` are you using? We renamed that attribute from `_download_config` to `download_conig` fairly recently, so updating to the newest version should resolve the issue:

```

pip install -U datasets

``` | Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found. I checked the source file here for the languages support:

ht... | 34 | Builder Configuration Update Required on Common Voice Dataset

Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to call the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't due to builder configuration not found.... | [

-0.4302419722,

0.0144608077,

-0.0066324342,

-0.0191776697,

0.2999699414,

0.1326845139,

-0.0474833548,

0.2533238828,

-0.2436418384,

0.2547218204,

0.1182378829,

0.0407666378,

-0.1010221541,

-0.0704348087,

0.085390307,

-0.2976481318,

0.0260496214,

0.1262313724,

0.197205618,

-0.183... |

https://github.com/huggingface/datasets/issues/3717 | wrong condition in `Features ClassLabel encode_example` | Hi @Tudyx,

Please note that in Python, the boolean NOT operator (`not`) has lower precedence than comparison operators (`<=`, `<`), thus the expression you mention is equivalent to:

```python

not (-1 <= example_data < self.num_classes)

```

Also note that as expected, the exception is raised if:

- `example_d... | ## Describe the bug

The `encode_example` function in *features.py* seems to have a wrong condition.

```python

if not -1 <= example_data < self.num_classes:

raise ValueError(f"Class label {example_data:d} greater than configured num_classes {self.num_classes}")

```

## Expected results

The `not - 1` co... | 71 | wrong condition in `Features ClassLabel encode_example`

## Describe the bug

The `encode_example` function in *features.py* seems to have a wrong condition.

```python

if not -1 <= example_data < self.num_classes:

raise ValueError(f"Class label {example_data:d} greater than configured num_classes {self.num_... | [

0.1391999722,

-0.1168350875,

-0.0464337245,

0.2833454311,

0.2257674038,

-0.0928371921,

0.2275467068,

0.2446250916,

-0.1854133755,

0.0163679607,

0.3163581491,

0.3322761357,

-0.2180692405,

0.4177816808,

-0.1956312656,

0.0690538064,

0.1742625237,

0.2923476398,

0.3052038252,

-0.146... |

https://github.com/huggingface/datasets/issues/3708 | Loading JSON gets stuck with many workers/threads | Hi ! Note that it does `block_size *= 2` until `block_size > len(batch)`, so it doesn't loop indefinitely. What do you mean by "get stuck indefinitely" then ? Is this the actual call to `paj.read_json` that hangs ?

> increasing the `chunksize` argument decreases the chance of getting stuck

Could you share the val... | ## Describe the bug

Loading a JSON dataset with `load_dataset` can get stuck when running on a machine with many CPUs. This is especially an issue when loading a large dataset on a large machine.

## Steps to reproduce the bug

I originally created the following script to reproduce the issue:

```python

from dat... | 78 | Loading JSON gets stuck with many workers/threads

## Describe the bug

Loading a JSON dataset with `load_dataset` can get stuck when running on a machine with many CPUs. This is especially an issue when loading a large dataset on a large machine.

## Steps to reproduce the bug

I originally created the following... | [

-0.2772898972,

-0.1832047999,

-0.1026515365,

0.1906440854,

0.131996572,

-0.0690689906,

0.4268065095,

0.2522391081,

0.291331321,

0.1814338863,

0.1807477623,

0.557315588,

0.1101846546,

-0.2725525796,

-0.0052468558,

0.0646460876,

-0.0230275784,

-0.1319034845,

-0.0031667606,

0.1560... |

https://github.com/huggingface/datasets/issues/3708 | Loading JSON gets stuck with many workers/threads | To clarify, I don't think it loops indefinitely but the `paj.read_json` gets stuck after the first try. That's why I think it could be an issue with a lock somewhere.

Using `load_dataset(..., chunksize=40<<20)` worked without errors. | ## Describe the bug

Loading a JSON dataset with `load_dataset` can get stuck when running on a machine with many CPUs. This is especially an issue when loading a large dataset on a large machine.

## Steps to reproduce the bug

I originally created the following script to reproduce the issue:

```python

from dat... | 36 | Loading JSON gets stuck with many workers/threads

## Describe the bug

Loading a JSON dataset with `load_dataset` can get stuck when running on a machine with many CPUs. This is especially an issue when loading a large dataset on a large machine.

## Steps to reproduce the bug

I originally created the following... | [

-0.2772898972,

-0.1832047999,

-0.1026515365,

0.1906440854,

0.131996572,

-0.0690689906,

0.4268065095,

0.2522391081,

0.291331321,

0.1814338863,

0.1807477623,

0.557315588,

0.1101846546,

-0.2725525796,

-0.0052468558,

0.0646460876,

-0.0230275784,

-0.1319034845,

-0.0031667606,

0.1560... |

https://github.com/huggingface/datasets/issues/3707 | `.select`: unexpected behavior with `indices` | Hi! Currently, we compute the final index as `index % len(dset)`. I agree this behavior is somewhat unexpected and that it would be more appropriate to raise an error instead (this is what `df.iloc` in Pandas does, for instance).

@albertvillanova @lhoestq wdyt? | ## Describe the bug

The `.select` method will not throw when sending `indices` bigger than the dataset length; `indices` will be wrapped instead. This behavior is not documented anywhere, and is not intuitive.

## Steps to reproduce the bug

```python

from datasets import Dataset

ds = Dataset.from_dict({"text": [... | 42 | `.select`: unexpected behavior with `indices`

## Describe the bug

The `.select` method will not throw when sending `indices` bigger than the dataset length; `indices` will be wrapped instead. This behavior is not documented anywhere, and is not intuitive.

## Steps to reproduce the bug

```python

from datasets i... | [

-0.1406795532,

-0.2200402766,

-0.0164005645,

0.4212484062,

0.0805128962,

-0.0664442927,

0.3318194151,

0.1800907254,

0.2260111421,

0.352301985,

0.0550057329,

0.5035140514,

0.2490186989,

0.0551462993,

-0.362167567,

-0.0069085811,

-0.0928678289,

-0.0007304215,

-0.1078659818,

-0.22... |

https://github.com/huggingface/datasets/issues/3707 | `.select`: unexpected behavior with `indices` | I agree. I think `index % len(dset)` was used to support negative indices.

I think this needs to be fixed in `datasets.formatting.formatting._check_valid_index_key` if I'm not mistaken | ## Describe the bug

The `.select` method will not throw when sending `indices` bigger than the dataset length; `indices` will be wrapped instead. This behavior is not documented anywhere, and is not intuitive.

## Steps to reproduce the bug

```python

from datasets import Dataset

ds = Dataset.from_dict({"text": [... | 26 | `.select`: unexpected behavior with `indices`

## Describe the bug

The `.select` method will not throw when sending `indices` bigger than the dataset length; `indices` will be wrapped instead. This behavior is not documented anywhere, and is not intuitive.

## Steps to reproduce the bug

```python

from datasets i... | [

-0.1581116766,

-0.25665465,

-0.0318490341,

0.3439429104,

0.0620099641,

-0.1153479517,

0.2147520185,

0.1504350156,

0.0474207774,

0.1787699163,

0.0234423447,

0.4659059048,

0.1104499027,

0.2063288987,

-0.2355961353,

-0.0393909998,

-0.0478052236,

0.1092761159,

-0.0718755051,

-0.206... |

https://github.com/huggingface/datasets/issues/3706 | Unable to load dataset 'big_patent' | Hi @ankitk2109,

Have you tried passing the split name with the keyword `split=`? See e.g. an example in our Quick Start docs: https://huggingface.co/docs/datasets/quickstart.html#load-the-dataset-and-model

```python

ds = load_dataset("big_patent", "d", split="validation") | ## Describe the bug

Unable to load the "big_patent" dataset

## Steps to reproduce the bug

```python

load_dataset('big_patent', 'd', 'validation')

```

## Expected results

Download big_patents' validation split from the 'd' subset

## Getting an error saying:

{FileNotFoundError}Local file ..\huggingface\dat... | 29 | Unable to load dataset 'big_patent'

## Describe the bug

Unable to load the "big_patent" dataset

## Steps to reproduce the bug

```python

load_dataset('big_patent', 'd', 'validation')

```

## Expected results

Download big_patents' validation split from the 'd' subset

## Getting an error saying:

{FileNotFo... | [

-0.2909577489,

-0.4436421692,

-0.0362716764,

0.4938029945,

0.3471500874,

0.1680756658,

0.1101178601,

0.2599283755,

0.123266384,

0.0131376898,

-0.3273753524,

0.0412844308,

-0.0739786997,

0.4042496979,

0.2557625175,

-0.3181647062,

0.0480562225,

0.0218535829,

-0.0038350688,

0.0419... |

https://github.com/huggingface/datasets/issues/3706 | Unable to load dataset 'big_patent' | Hi @albertvillanova,

Thanks for your response.

Yes, I tried the `split='validation'` as well. But getting the same issue. | ## Describe the bug

Unable to load the "big_patent" dataset

## Steps to reproduce the bug

```python

load_dataset('big_patent', 'd', 'validation')

```

## Expected results

Download big_patents' validation split from the 'd' subset

## Getting an error saying:

{FileNotFoundError}Local file ..\huggingface\dat... | 18 | Unable to load dataset 'big_patent'

## Describe the bug

Unable to load the "big_patent" dataset

## Steps to reproduce the bug

```python

load_dataset('big_patent', 'd', 'validation')

```

## Expected results

Download big_patents' validation split from the 'd' subset

## Getting an error saying:

{FileNotFo... | [

-0.2848466933,

-0.0606216937,

-0.0639390498,

0.575901866,

0.2154278904,

0.1228088588,

0.2310488224,

0.4681129456,

0.1615882516,

0.0266631283,

-0.2342445105,

0.0454667844,

-0.0157030988,

0.1677100509,

0.2608820796,

-0.2648475766,

0.0229797028,

0.0599010997,

0.001682676,

-0.01707... |

https://github.com/huggingface/datasets/issues/3706 | Unable to load dataset 'big_patent' | I'm sorry, but I can't reproduce your problem:

```python

In [5]: ds = load_dataset("big_patent", "d", split="validation")

Downloading and preparing dataset big_patent/d (download: 6.01 GiB, generated: 169.61 MiB, post-processed: Unknown size, total: 6.17 GiB) to .../.cache/big_patent/d/1.0.0/bdefa7c0b39fba8bba1c6331... | ## Describe the bug

Unable to load the "big_patent" dataset

## Steps to reproduce the bug

```python

load_dataset('big_patent', 'd', 'validation')

```

## Expected results

Download big_patents' validation split from the 'd' subset

## Getting an error saying:

{FileNotFoundError}Local file ..\huggingface\dat... | 72 | Unable to load dataset 'big_patent'

## Describe the bug

Unable to load the "big_patent" dataset

## Steps to reproduce the bug

```python

load_dataset('big_patent', 'd', 'validation')

```

## Expected results

Download big_patents' validation split from the 'd' subset

## Getting an error saying:

{FileNotFo... | [

-0.3682878315,

-0.0730192587,

-0.0981643498,

0.4866812825,

0.2612976134,

0.1087043956,

0.1902627051,

0.4533425868,

0.1328501701,

0.0507911704,

-0.1489647776,

0.0408353321,

-0.0469963737,

0.1007054374,

0.2328069359,

-0.209725529,

-0.0155005781,

0.02328844,

0.0051829605,

0.006029... |

https://github.com/huggingface/datasets/issues/3706 | Unable to load dataset 'big_patent' | Maybe you had a connection issue while downloading the file and this was corrupted?

Our cache system uses the file you downloaded first time.

If so, you could try forcing redownload of the file with:

```python

ds = load_dataset("big_patent", "d", split="validation", download_mode="force_redownload") | ## Describe the bug

Unable to load the "big_patent" dataset

## Steps to reproduce the bug

```python

load_dataset('big_patent', 'd', 'validation')

```

## Expected results

Download big_patents' validation split from the 'd' subset

## Getting an error saying:

{FileNotFoundError}Local file ..\huggingface\dat... | 42 | Unable to load dataset 'big_patent'

## Describe the bug

Unable to load the "big_patent" dataset

## Steps to reproduce the bug

```python

load_dataset('big_patent', 'd', 'validation')

```

## Expected results

Download big_patents' validation split from the 'd' subset

## Getting an error saying:

{FileNotFo... | [

-0.3243025839,

-0.0634645075,

-0.0910803452,

0.4624112546,

0.2718907893,

0.1327952147,

0.1778512746,

0.3625104427,

0.2114627212,

0.013645092,

-0.1294031888,

0.0310820155,

-0.0108539835,

0.0591872036,

0.2250686884,

-0.1928537786,

-0.0490768813,

0.0843679681,

0.0163288545,

0.0109... |

https://github.com/huggingface/datasets/issues/3706 | Unable to load dataset 'big_patent' | I am able to download the dataset with ``` download_mode="force_redownload"```. As you mentioned it was an issue with the cached version which was failed earlier due to a network issue. I am closing the issue now, once again thank you. | ## Describe the bug

Unable to load the "big_patent" dataset

## Steps to reproduce the bug

```python

load_dataset('big_patent', 'd', 'validation')

```

## Expected results

Download big_patents' validation split from the 'd' subset

## Getting an error saying:

{FileNotFoundError}Local file ..\huggingface\dat... | 40 | Unable to load dataset 'big_patent'

## Describe the bug

Unable to load the "big_patent" dataset

## Steps to reproduce the bug

```python

load_dataset('big_patent', 'd', 'validation')

```

## Expected results

Download big_patents' validation split from the 'd' subset

## Getting an error saying:

{FileNotFo... | [

-0.2900757492,

-0.0335867479,

-0.0612993911,

0.5121245384,

0.2357622385,

0.1199185178,

0.2026650012,

0.4182065725,

0.1493887901,

-0.0536810383,

-0.1301836371,

-0.002370433,

-0.0229688324,

0.0843351781,

0.3025264144,

-0.2059617341,

0.029935997,

0.047047846,

-0.026889408,

-0.0148... |

https://github.com/huggingface/datasets/issues/3704 | OSCAR-2109 datasets are misaligned and truncated | Hi @adrianeboyd, thanks for reporting.

There is indeed a bug in that community dataset:

Line:

```python

metadata_and_text_files = list(zip(metadata_files, text_files))

```

should be replaced with

```python

metadata_and_text_files = list(zip(sorted(metadata_files), sorted(text_files)))

```

I am going to c... | ## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few examples, although I'm not sure how deterministic the par... | 51 | OSCAR-2109 datasets are misaligned and truncated

## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few exampl... | [

-0.3790073395,

0.3509358466,

0.0164461136,

0.5407814384,

-0.1386849582,

0.0147254271,

0.2296267599,

0.3700642288,

-0.3047654331,

-0.0990702137,

0.0361928083,

-0.0641971603,

0.1635019481,

-0.0632026196,

0.0641614646,

-0.2708623111,

0.1433134079,

0.0770913064,

0.0587200187,

-0.09... |

https://github.com/huggingface/datasets/issues/3704 | OSCAR-2109 datasets are misaligned and truncated | That fix is part of it, but it's clearly not the only issue.

I also already contacted the OSCAR creators, but I reported it here because it looked like huggingface members were the main authors in the git history. Is there a better place to have reported this? | ## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few examples, although I'm not sure how deterministic the par... | 48 | OSCAR-2109 datasets are misaligned and truncated

## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few exampl... | [

-0.3790073395,

0.3509358466,

0.0164461136,

0.5407814384,

-0.1386849582,

0.0147254271,

0.2296267599,

0.3700642288,

-0.3047654331,

-0.0990702137,

0.0361928083,

-0.0641971603,

0.1635019481,

-0.0632026196,

0.0641614646,

-0.2708623111,

0.1433134079,

0.0770913064,

0.0587200187,

-0.09... |

https://github.com/huggingface/datasets/issues/3704 | OSCAR-2109 datasets are misaligned and truncated | Hello,

We've had an issue that could be linked to this one here: https://github.com/oscar-corpus/corpus/issues/15.

I have been spot checking the source (`.txt`/`.jsonl`) files for a while, and have not found issues, especially in the start/end of corpora (but I conceed that more integration testing would be neces... | ## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few examples, although I'm not sure how deterministic the par... | 118 | OSCAR-2109 datasets are misaligned and truncated

## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few exampl... | [

-0.3790073395,

0.3509358466,

0.0164461136,

0.5407814384,

-0.1386849582,

0.0147254271,

0.2296267599,

0.3700642288,

-0.3047654331,

-0.0990702137,

0.0361928083,

-0.0641971603,

0.1635019481,

-0.0632026196,

0.0641614646,

-0.2708623111,

0.1433134079,

0.0770913064,

0.0587200187,

-0.09... |

https://github.com/huggingface/datasets/issues/3704 | OSCAR-2109 datasets are misaligned and truncated | I'm happy to move the discussion to the other repo!

Merely sorting the files only **maybe** fixes the processing of the first part. If the first part contains non-unix newlines, it will still be misaligned/truncated, and all the following parts will be truncated with incorrect text offsets and metadata due the offse... | ## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few examples, although I'm not sure how deterministic the par... | 55 | OSCAR-2109 datasets are misaligned and truncated

## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few exampl... | [

-0.3790073395,

0.3509358466,

0.0164461136,

0.5407814384,

-0.1386849582,

0.0147254271,

0.2296267599,

0.3700642288,

-0.3047654331,

-0.0990702137,

0.0361928083,

-0.0641971603,

0.1635019481,

-0.0632026196,

0.0641614646,

-0.2708623111,

0.1433134079,

0.0770913064,

0.0587200187,

-0.09... |

https://github.com/huggingface/datasets/issues/3704 | OSCAR-2109 datasets are misaligned and truncated | Hi @Uinelj, This is a total noobs question but how can I integrate that bugfix into my code? I reinstalled the datasets library this time from source. Should that have fixed the issue? I am still facing the misalignment issue. Do I need to download the dataset from scratch? | ## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few examples, although I'm not sure how deterministic the par... | 49 | OSCAR-2109 datasets are misaligned and truncated

## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few exampl... | [

-0.3790073395,

0.3509358466,

0.0164461136,

0.5407814384,

-0.1386849582,

0.0147254271,

0.2296267599,

0.3700642288,

-0.3047654331,

-0.0990702137,

0.0361928083,

-0.0641971603,

0.1635019481,

-0.0632026196,

0.0641614646,

-0.2708623111,

0.1433134079,

0.0770913064,

0.0587200187,

-0.09... |

https://github.com/huggingface/datasets/issues/3704 | OSCAR-2109 datasets are misaligned and truncated | Sorry @norakassner for the late reply.

There are indeed several issues creating the misalignment, as @adrianeboyd cleverly pointed out:

- https://huggingface.co/datasets/oscar-corpus/OSCAR-2109/commit/3cd7e95aa1799b73c5ea8afc3989635f3e19b86b fixed one of them

- but there are still others to be fixed | ## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few examples, although I'm not sure how deterministic the par... | 34 | OSCAR-2109 datasets are misaligned and truncated

## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few exampl... | [

-0.3790073395,

0.3509358466,

0.0164461136,

0.5407814384,

-0.1386849582,

0.0147254271,

0.2296267599,

0.3700642288,

-0.3047654331,

-0.0990702137,

0.0361928083,

-0.0641971603,

0.1635019481,

-0.0632026196,

0.0641614646,

-0.2708623111,

0.1433134079,

0.0770913064,

0.0587200187,

-0.09... |

https://github.com/huggingface/datasets/issues/3704 | OSCAR-2109 datasets are misaligned and truncated | Normally, the issues should be fixed now:

- Fix offset initialization for each file: https://huggingface.co/datasets/oscar-corpus/OSCAR-2109/commit/1ad9b7bfe00798a9258a923b887bb1c8d732b833

- Disable default universal newline support: https://huggingface.co/datasets/oscar-corpus/OSCAR-2109/commit/0c2f307d3167f03632f50... | ## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few examples, although I'm not sure how deterministic the par... | 35 | OSCAR-2109 datasets are misaligned and truncated

## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few exampl... | [

-0.3790073395,

0.3509358466,

0.0164461136,

0.5407814384,

-0.1386849582,

0.0147254271,

0.2296267599,

0.3700642288,

-0.3047654331,

-0.0990702137,

0.0361928083,

-0.0641971603,

0.1635019481,

-0.0632026196,

0.0641614646,

-0.2708623111,

0.1433134079,

0.0770913064,

0.0587200187,

-0.09... |

https://github.com/huggingface/datasets/issues/3704 | OSCAR-2109 datasets are misaligned and truncated | Thanks for the updates!

The purist in me would still like to have the rstrip not strip additional characters from the original text (unicode whitespace mainly in practice, I think), but the differences are extremely small in practice and it doesn't actually matter for my current task:

```python

text = "".join([t... | ## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few examples, although I'm not sure how deterministic the par... | 56 | OSCAR-2109 datasets are misaligned and truncated

## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few exampl... | [

-0.3790073395,

0.3509358466,

0.0164461136,

0.5407814384,

-0.1386849582,

0.0147254271,

0.2296267599,

0.3700642288,

-0.3047654331,

-0.0990702137,

0.0361928083,

-0.0641971603,

0.1635019481,

-0.0632026196,

0.0641614646,

-0.2708623111,

0.1433134079,

0.0770913064,

0.0587200187,

-0.09... |

https://github.com/huggingface/datasets/issues/3703 | ImportError: To be able to use this metric, you need to install the following dependencies['seqeval'] using 'pip install seqeval' for instance' | Hi! Some of our metrics require additional dependencies to work. In your case, simply installing the `seqeval` package with `pip install seqeval` should resolve the issue. | hi :

I want to use the seqeval indicator because of direct load_ When metric ('seqeval '), it will prompt that the network connection fails. So I downloaded the seqeval Py to load locally. Loading code: metric = load_ metric(path='mymetric/seqeval/seqeval.py')

But tips:

Traceback (most recent call last):

File... | 26 | ImportError: To be able to use this metric, you need to install the following dependencies['seqeval'] using 'pip install seqeval' for instance'

hi :

I want to use the seqeval indicator because of direct load_ When metric ('seqeval '), it will prompt that the network connection fails. So I downloaded the seqeval Py t... | [

-0.1979477853,

-0.0261322893,

-0.0460704267,

0.048714228,

0.2330710441,

-0.0966798216,

0.3034621775,

-0.0408191793,

0.1501882523,

0.2724968195,

-0.0265025795,

0.4663179517,

-0.117960006,

-0.0697474778,

0.0039565205,

0.055459097,

-0.221604079,

0.2347217053,

0.0374869257,

-0.0331... |

https://github.com/huggingface/datasets/issues/3703 | ImportError: To be able to use this metric, you need to install the following dependencies['seqeval'] using 'pip install seqeval' for instance' | > Hi! Some of our metrics require additional dependencies to work. In your case, simply installing the `seqeval` package with `pip install seqeval` should resolve the issue.

I installed seqeval, but still reported the same error. That's too bad.

| hi :

I want to use the seqeval indicator because of direct load_ When metric ('seqeval '), it will prompt that the network connection fails. So I downloaded the seqeval Py to load locally. Loading code: metric = load_ metric(path='mymetric/seqeval/seqeval.py')

But tips:

Traceback (most recent call last):

File... | 39 | ImportError: To be able to use this metric, you need to install the following dependencies['seqeval'] using 'pip install seqeval' for instance'

hi :

I want to use the seqeval indicator because of direct load_ When metric ('seqeval '), it will prompt that the network connection fails. So I downloaded the seqeval Py t... | [

-0.2025142908,

-0.0279516838,

-0.046689935,

0.0476437584,

0.229351297,

-0.1002444327,

0.3064735532,

-0.0407144725,

0.1468651444,

0.276491046,

-0.0252320133,

0.4661178589,

-0.1189252585,

-0.0669726729,

-0.0000810218,

0.0616327114,

-0.2240398824,

0.2324857861,

0.0310468934,

-0.02... |

https://github.com/huggingface/datasets/issues/3703 | ImportError: To be able to use this metric, you need to install the following dependencies['seqeval'] using 'pip install seqeval' for instance' | > > Hi! Some of our metrics require additional dependencies to work. In your case, simply installing the `seqeval` package with `pip install seqeval` should resolve the issue.

> > I installed seqeval, but still reported the same error. That's too bad.

Same issue here. What should I do to fix this error? Please help... | hi :

I want to use the seqeval indicator because of direct load_ When metric ('seqeval '), it will prompt that the network connection fails. So I downloaded the seqeval Py to load locally. Loading code: metric = load_ metric(path='mymetric/seqeval/seqeval.py')

But tips:

Traceback (most recent call last):

File... | 57 | ImportError: To be able to use this metric, you need to install the following dependencies['seqeval'] using 'pip install seqeval' for instance'

hi :

I want to use the seqeval indicator because of direct load_ When metric ('seqeval '), it will prompt that the network connection fails. So I downloaded the seqeval Py t... | [

-0.2018062174,

-0.027352469,

-0.0475676991,

0.0484961011,

0.2304400206,

-0.0999850258,

0.3054769635,

-0.0413617231,

0.1490331292,

0.2779763043,

-0.0248936471,

0.4655256569,

-0.1186925694,

-0.0632172748,

-0.0003957157,

0.0598772839,

-0.225105837,

0.2342484146,

0.0314481072,

-0.0... |

https://github.com/huggingface/datasets/issues/3703 | ImportError: To be able to use this metric, you need to install the following dependencies['seqeval'] using 'pip install seqeval' for instance' | I tried to install **seqeval** package through anaconda instead of pip:

`conda install -c conda-forge seqeval`

It worked for me! | hi :

I want to use the seqeval indicator because of direct load_ When metric ('seqeval '), it will prompt that the network connection fails. So I downloaded the seqeval Py to load locally. Loading code: metric = load_ metric(path='mymetric/seqeval/seqeval.py')

But tips:

Traceback (most recent call last):

File... | 20 | ImportError: To be able to use this metric, you need to install the following dependencies['seqeval'] using 'pip install seqeval' for instance'

hi :

I want to use the seqeval indicator because of direct load_ When metric ('seqeval '), it will prompt that the network connection fails. So I downloaded the seqeval Py t... | [

-0.2087471485,

0.0372918658,

-0.0465946794,

0.0723289177,

0.2412151247,

-0.0558816716,

0.2564868331,

-0.0605246983,

0.1419683248,

0.2192429155,

-0.0591691583,

0.4464016259,

-0.0956755057,

-0.0059291921,

-0.0359144136,

0.1483167112,

-0.2069616914,

0.2406514287,

-0.0371867791,

0.... |

https://github.com/huggingface/datasets/issues/3700 | Unable to load a dataset | Hi! `load_dataset` is intended to be used to load a canonical dataset (`wikipedia`), a packaged dataset (`csv`, `json`, ...) or a dataset hosted on the Hub. For local datasets saved with `save_to_disk("path/to/dataset")`, use `load_from_disk("path/to/dataset")`. | ## Describe the bug

Unable to load a dataset from Huggingface that I have just saved.

## Steps to reproduce the bug

On Google colab

`! pip install datasets `

`from datasets import load_dataset`

`my_path = "wiki_dataset"`

`dataset = load_dataset('wikipedia', "20200501.fr")`

`dataset.save_to_disk(my_path)`

`... | 34 | Unable to load a dataset

## Describe the bug

Unable to load a dataset from Huggingface that I have just saved.

## Steps to reproduce the bug

On Google colab

`! pip install datasets `

`from datasets import load_dataset`

`my_path = "wiki_dataset"`

`dataset = load_dataset('wikipedia', "20200501.fr")`

`datase... | [

-0.2790259123,

-0.2967556417,

0.0411759429,

0.6267769337,

0.3320978582,

0.1287171245,

0.2145056725,

-0.0036905431,

0.3158269823,

0.100925535,

-0.2397874296,

0.3568312526,

-0.0890317336,

0.3122532368,

0.0596994087,

-0.2918172181,

0.1328980625,

-0.1646209657,

-0.1491223723,

0.021... |

https://github.com/huggingface/datasets/issues/3688 | Pyarrow version error | Hi @Zaker237, thanks for reporting.

This is weird: the error you get is only thrown if the installed pyarrow version is less than 3.0.0.

Could you please check that you install pyarrow in the same Python virtual environment where you installed datasets?

From the Python command line (or terminal) where you get ... | ## Describe the bug

I installed datasets(version 1.17.0, 1.18.0, 1.18.3) but i'm right now nor able to import it because of pyarrow. when i try to import it, i get the following error:

`To use datasets, the module pyarrow>=3.0.0 is required, and the current version of pyarrow doesn't match this condition`.

i tryed w... | 64 | Pyarrow version error

## Describe the bug

I installed datasets(version 1.17.0, 1.18.0, 1.18.3) but i'm right now nor able to import it because of pyarrow. when i try to import it, i get the following error:

`To use datasets, the module pyarrow>=3.0.0 is required, and the current version of pyarrow doesn't match thi... | [

-0.4256339073,

0.2237600088,

-0.0069542294,

0.1207932457,

0.0590526015,

0.0466515869,

0.1877537966,

0.3738027811,

-0.2632450461,

-0.0977328941,

0.1039726883,

0.30188784,

-0.1692364514,

-0.0959769338,

-0.0230374504,

-0.1642787755,

0.3303413987,

0.1971314251,

-0.0938647017,

0.050... |

https://github.com/huggingface/datasets/issues/3688 | Pyarrow version error | hi @albertvillanova i try yesterday to create a new python environement with python 7 and try it on the environement and it worked. so i think that the error was not the package but may be jupyter notebook on conda. still yet i'm not yet sure but it worked in an environment created with venv | ## Describe the bug

I installed datasets(version 1.17.0, 1.18.0, 1.18.3) but i'm right now nor able to import it because of pyarrow. when i try to import it, i get the following error:

`To use datasets, the module pyarrow>=3.0.0 is required, and the current version of pyarrow doesn't match this condition`.

i tryed w... | 55 | Pyarrow version error

## Describe the bug

I installed datasets(version 1.17.0, 1.18.0, 1.18.3) but i'm right now nor able to import it because of pyarrow. when i try to import it, i get the following error:

`To use datasets, the module pyarrow>=3.0.0 is required, and the current version of pyarrow doesn't match thi... | [

-0.4256339073,

0.2237600088,

-0.0069542294,

0.1207932457,

0.0590526015,

0.0466515869,

0.1877537966,

0.3738027811,

-0.2632450461,

-0.0977328941,

0.1039726883,

0.30188784,

-0.1692364514,

-0.0959769338,

-0.0230374504,

-0.1642787755,

0.3303413987,

0.1971314251,

-0.0938647017,

0.050... |

https://github.com/huggingface/datasets/issues/3688 | Pyarrow version error | OK, thanks @Zaker237 for your feedback.

I close this issue then. Please, feel free to reopen it if the problem arises again. | ## Describe the bug

I installed datasets(version 1.17.0, 1.18.0, 1.18.3) but i'm right now nor able to import it because of pyarrow. when i try to import it, i get the following error:

`To use datasets, the module pyarrow>=3.0.0 is required, and the current version of pyarrow doesn't match this condition`.

i tryed w... | 22 | Pyarrow version error

## Describe the bug

I installed datasets(version 1.17.0, 1.18.0, 1.18.3) but i'm right now nor able to import it because of pyarrow. when i try to import it, i get the following error:

`To use datasets, the module pyarrow>=3.0.0 is required, and the current version of pyarrow doesn't match thi... | [

-0.4256339073,

0.2237600088,

-0.0069542294,

0.1207932457,

0.0590526015,

0.0466515869,

0.1877537966,

0.3738027811,

-0.2632450461,

-0.0977328941,

0.1039726883,

0.30188784,

-0.1692364514,

-0.0959769338,

-0.0230374504,

-0.1642787755,

0.3303413987,

0.1971314251,

-0.0938647017,

0.050... |

https://github.com/huggingface/datasets/issues/3687 | Can't get the text data when calling to_tf_dataset | You are correct that `to_tf_dataset` only handles numerical columns right now, yes, though this is a limitation we might remove in future! The main reason we do this is that our models mostly do not include the tokenizer as a model layer, because it's very difficult to compile some of them in TF. So the "normal" Huggin... | I am working with the SST2 dataset, and am using TensorFlow 2.5

I'd like to convert it to a `tf.data.Dataset` by calling the `to_tf_dataset` method.

The following snippet is what I am using to achieve this:

```

from datasets import load_dataset

from transformers import DefaultDataCollator

data_collator = Defa... | 96 | Can't get the text data when calling to_tf_dataset

I am working with the SST2 dataset, and am using TensorFlow 2.5

I'd like to convert it to a `tf.data.Dataset` by calling the `to_tf_dataset` method.

The following snippet is what I am using to achieve this:

```

from datasets import load_dataset

from transforme... | [

0.306876719,

-0.2420510054,

0.0682803616,

0.3844340146,

0.311304301,

0.1206924245,

0.3427685201,

0.4319320321,

-0.1418879032,

0.1407022178,

-0.0737772956,

-0.0379524305,

0.037617296,

0.3645371497,

0.1628638953,

-0.2193426192,

0.0983592644,

0.1495360136,

-0.3627763391,

-0.218728... |

https://github.com/huggingface/datasets/issues/3687 | Can't get the text data when calling to_tf_dataset | Thanks for the quick follow-up to my issue.

For my use-case, instead of the built-in tokenizers I wanted to use the `TextVectorization` layer to map from strings to integers. To achieve this, I came up with the following solution:

```

from datasets import load_dataset

from transformers import DefaultDataCollato... | I am working with the SST2 dataset, and am using TensorFlow 2.5

I'd like to convert it to a `tf.data.Dataset` by calling the `to_tf_dataset` method.

The following snippet is what I am using to achieve this:

```

from datasets import load_dataset

from transformers import DefaultDataCollator

data_collator = Defa... | 212 | Can't get the text data when calling to_tf_dataset

I am working with the SST2 dataset, and am using TensorFlow 2.5

I'd like to convert it to a `tf.data.Dataset` by calling the `to_tf_dataset` method.

The following snippet is what I am using to achieve this:

```

from datasets import load_dataset

from transforme... | [

0.306876719,

-0.2420510054,

0.0682803616,

0.3844340146,

0.311304301,

0.1206924245,

0.3427685201,

0.4319320321,

-0.1418879032,

0.1407022178,

-0.0737772956,

-0.0379524305,

0.037617296,

0.3645371497,

0.1628638953,

-0.2193426192,

0.0983592644,

0.1495360136,

-0.3627763391,

-0.218728... |

https://github.com/huggingface/datasets/issues/3687 | Can't get the text data when calling to_tf_dataset | > For the future, however, it'd be more convenient to get the string data, since I am also inspecting the dataset (longest sentence, shortest sentence), which is more challenging when working with integer or float.

Yes, I agree, so let's keep this issue open. | I am working with the SST2 dataset, and am using TensorFlow 2.5

I'd like to convert it to a `tf.data.Dataset` by calling the `to_tf_dataset` method.

The following snippet is what I am using to achieve this:

```

from datasets import load_dataset

from transformers import DefaultDataCollator

data_collator = Defa... | 44 | Can't get the text data when calling to_tf_dataset

I am working with the SST2 dataset, and am using TensorFlow 2.5

I'd like to convert it to a `tf.data.Dataset` by calling the `to_tf_dataset` method.

The following snippet is what I am using to achieve this:

```

from datasets import load_dataset

from transforme... | [

0.306876719,

-0.2420510054,

0.0682803616,

0.3844340146,

0.311304301,

0.1206924245,

0.3427685201,

0.4319320321,

-0.1418879032,

0.1407022178,

-0.0737772956,

-0.0379524305,

0.037617296,

0.3645371497,

0.1628638953,

-0.2193426192,

0.0983592644,

0.1495360136,

-0.3627763391,

-0.218728... |

https://github.com/huggingface/datasets/issues/3686 | `Translation` features cannot be `flatten`ed | Thanks for reporting, @SBrandeis! Some additional feature types that don't behave as expected when flattened: `Audio`, `Image` and `TranslationVariableLanguages` | ## Describe the bug

(`Dataset.flatten`)[https://github.com/huggingface/datasets/blob/master/src/datasets/arrow_dataset.py#L1265] fails for columns with feature (`Translation`)[https://github.com/huggingface/datasets/blob/3edbeb0ec6519b79f1119adc251a1a6b379a2c12/src/datasets/features/translation.py#L8]

## Steps to... | 19 | `Translation` features cannot be `flatten`ed

## Describe the bug

(`Dataset.flatten`)[https://github.com/huggingface/datasets/blob/master/src/datasets/arrow_dataset.py#L1265] fails for columns with feature (`Translation`)[https://github.com/huggingface/datasets/blob/3edbeb0ec6519b79f1119adc251a1a6b379a2c12/src/data... | [

0.0999352336,

-0.5199523568,

0.0124358097,

0.3275805712,

0.3463025689,

0.0602360927,

0.3220860362,

0.1855656654,

0.0887600854,

0.1518424451,

0.0338772871,

0.6146896482,

0.2447858155,

0.2951707542,

-0.2488523573,

-0.3565292656,

0.170593679,

0.0839366689,

-0.1575884372,

-0.081798... |

https://github.com/huggingface/datasets/issues/3679 | Download datasets from a private hub | Hi ! For information one can set the environment variable `HF_ENDPOINT` (default is `https://huggingface.co`) if they want to use a private hub.

We may need to coordinate with the other libraries to have a consistent way of changing the hub endpoint | In the context of a private hub deployment, customers would like to use load_dataset() to load datasets from their hub, not from the public hub. This doesn't seem to be configurable at the moment and it would be nice to add this feature.

The obvious workaround is to clone the repo first and then load it from local s... | 41 | Download datasets from a private hub

In the context of a private hub deployment, customers would like to use load_dataset() to load datasets from their hub, not from the public hub. This doesn't seem to be configurable at the moment and it would be nice to add this feature.

The obvious workaround is to clone the r... | [

-0.4606108665,

0.0771518275,

0.0306206644,

0.1708162427,

-0.211316064,

-0.1057054624,

0.5121506453,

0.2544353306,

0.4819358885,

0.2293823361,

-0.5677485466,

0.197627902,

0.2814869881,

0.2804977596,

0.2473968565,

0.0667531788,

0.0141825834,

0.3287018239,

-0.157313481,

-0.2259977... |

https://github.com/huggingface/datasets/issues/3677 | Discovery cannot be streamed anymore | Seems like a regression from https://github.com/huggingface/datasets/pull/2843

Or maybe it's an issue with the hosting. I don't think so, though, because https://www.dropbox.com/s/aox84z90nyyuikz/discovery.zip seems to work as expected

| ## Describe the bug

A clear and concise description of what the bug is.

## Steps to reproduce the bug

```python

from datasets import load_dataset

iterable_dataset = load_dataset("discovery", name="discovery", split="train", streaming=True)

list(iterable_dataset.take(1))

```

## Expected results

The first ... | 26 | Discovery cannot be streamed anymore

## Describe the bug

A clear and concise description of what the bug is.

## Steps to reproduce the bug

```python

from datasets import load_dataset

iterable_dataset = load_dataset("discovery", name="discovery", split="train", streaming=True)

list(iterable_dataset.take(1))

`... | [

-0.3655185997,

-0.0733559355,

-0.032282766,

-0.0026658794,

0.150663197,

-0.1250083745,

0.1500283629,

0.4487424493,

-0.0735848546,

0.1144562662,

-0.2661015987,

0.3279671073,

-0.2113558352,

0.1654202789,

0.2201966941,

-0.1466775239,

-0.0193212759,

0.0676078498,

-0.0802748948,

-0.... |

https://github.com/huggingface/datasets/issues/3677 | Discovery cannot be streamed anymore | Hi @severo, thanks for reporting.

Some servers do not support HTTP range requests, and those are required to stream some file formats (like ZIP in this case).

Let me try to propose a workaround. | ## Describe the bug

A clear and concise description of what the bug is.

## Steps to reproduce the bug

```python

from datasets import load_dataset

iterable_dataset = load_dataset("discovery", name="discovery", split="train", streaming=True)

list(iterable_dataset.take(1))

```

## Expected results

The first ... | 34 | Discovery cannot be streamed anymore

## Describe the bug

A clear and concise description of what the bug is.

## Steps to reproduce the bug

```python

from datasets import load_dataset

iterable_dataset = load_dataset("discovery", name="discovery", split="train", streaming=True)

list(iterable_dataset.take(1))

`... | [

-0.3655185997,

-0.0733559355,

-0.032282766,

-0.0026658794,

0.150663197,

-0.1250083745,

0.1500283629,

0.4487424493,

-0.0735848546,

0.1144562662,

-0.2661015987,

0.3279671073,

-0.2113558352,

0.1654202789,

0.2201966941,

-0.1466775239,

-0.0193212759,

0.0676078498,

-0.0802748948,

-0.... |

https://github.com/huggingface/datasets/issues/3676 | `None` replaced by `[]` after first batch in map | It looks like this is because of this behavior in pyarrow:

```python

import pyarrow as pa

arr = pa.array([None, [0]])

reconstructed_arr = pa.ListArray.from_arrays(arr.offsets, arr.values)

print(reconstructed_arr.to_pylist())

# [[], [0]]

```

It seems that `arr.offsets` can reconstruct the array properly, but... | Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# b

# 0 [None, [0]]

# 1 [[], [0]]

# ... | 89 | `None` replaced by `[]` after first batch in map

Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# ... | [

-0.1136860177,

-0.191415295,

-0.0299211461,

0.0782203823,

0.4104258418,

-0.0416076481,

0.6156228781,

0.0721306652,

-0.0982016549,

0.1066264659,

-0.1036111191,

0.3490240872,

0.1736710072,

-0.4175100029,

-0.1059341058,

0.028131295,

0.1095845029,

0.4044575393,

-0.1162986234,

-0.06... |

https://github.com/huggingface/datasets/issues/3676 | `None` replaced by `[]` after first batch in map | The offsets don't have nulls because they don't include the validity bitmap from `arr.buffers()[0]`, which is used to say which values are null and which values are non-null.

Though the validity bitmap also seems to be wrong:

```python

bin(int(arr.buffers()[0].hex(), 16))

# '0b10'

# it should be 0b110 - 1 corres... | Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# b

# 0 [None, [0]]

# 1 [[], [0]]

# ... | 158 | `None` replaced by `[]` after first batch in map

Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# ... | [

-0.0358240791,

-0.2432300597,

-0.0416246466,

0.1726666093,

0.3844224215,

0.0432761833,

0.6972999573,

0.2239293009,

-0.0032909082,

0.144280538,

-0.0846433192,

0.3390791714,

0.0880538225,

-0.2586378157,

-0.1205720678,

-0.0456771702,

0.1046745926,

0.4155332446,

-0.1235405356,

-0.0... |

https://github.com/huggingface/datasets/issues/3676 | `None` replaced by `[]` after first batch in map | FYI the behavior is the same with:

- `datasets` version: 1.18.3

- Platform: Linux-5.8.0-50-generic-x86_64-with-debian-bullseye-sid

- Python version: 3.7.11

- PyArrow version: 6.0.1

but not with:

- `datasets` version: 1.8.0

- Platform: Linux-4.18.0-305.40.2.el8_4.x86_64-x86_64-with-redhat-8.4-Ootpa

- Python ... | Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# b

# 0 [None, [0]]

# 1 [[], [0]]

# ... | 57 | `None` replaced by `[]` after first batch in map

Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# ... | [

-0.120345071,

-0.4828740358,

-0.0233135875,

0.090897873,

0.4305691123,

0.0681472272,

0.6334287524,

0.1448312998,

0.2020837963,

0.1019381881,

-0.0855532065,

0.3341479897,

0.1260546744,

-0.1297750026,

-0.2391028702,

-0.0439484753,

0.1119943112,

0.2894803882,

-0.2681235969,

-0.047... |

https://github.com/huggingface/datasets/issues/3676 | `None` replaced by `[]` after first batch in map | Thanks for the insights @PaulLerner !

I found a way to workaround this issue for the code example presented in this issue.

Note that empty lists will still appear when you explicitly `cast` a list of lists that contain None values like [None, [0]] to a new feature type (e.g. to change the integer precision). In t... | Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# b

# 0 [None, [0]]

# 1 [[], [0]]

# ... | 87 | `None` replaced by `[]` after first batch in map

Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# ... | [

-0.0742934793,

-0.4457454085,

-0.0595099702,

0.0777366906,

0.5046238899,

0.0152352257,

0.6655239463,

0.2754406631,

0.278647393,

0.2214797139,

-0.0979031622,

0.2897973359,

0.0528755337,

0.0477773137,

-0.3361816406,

-0.1497146189,

0.0821734816,

0.3947313726,

-0.247402519,

-0.1306... |

https://github.com/huggingface/datasets/issues/3676 | `None` replaced by `[]` after first batch in map | Hi! I feel like I’m missing something in your answer, *what* is the workaround? Is it fixed in some `datasets` version? | Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# b

# 0 [None, [0]]

# 1 [[], [0]]

# ... | 21 | `None` replaced by `[]` after first batch in map

Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# ... | [

-0.1706128418,

-0.456341058,

-0.0308786687,

0.0773481429,

0.3964784145,

0.0354282483,

0.6240733266,

0.0938024968,

0.2293993235,

0.1052416265,

-0.02331887,

0.3047322631,

0.1261317134,

-0.0278188307,

-0.2588295341,

-0.0395659097,

0.1031301022,

0.330183208,

-0.2813706994,

-0.02351... |

https://github.com/huggingface/datasets/issues/3676 | `None` replaced by `[]` after first batch in map | `pa.ListArray.from_arrays` returns empty lists instead of None values. The workaround I added inside `datasets` simply consists in not using `pa.ListArray.from_arrays` :)

Once this PR [here ](https://github.com/huggingface/datasets/pull/4282)is merged, we'll release a new version of `datasets` that currectly returns... | Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# b

# 0 [None, [0]]

# 1 [[], [0]]

# ... | 47 | `None` replaced by `[]` after first batch in map

Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# ... | [

-0.1458847523,

-0.4942373335,

-0.0464127921,

0.1250201464,

0.454267323,

0.0150745008,

0.5315796137,

0.1924663633,

0.2851714194,

0.1692066044,

-0.1701225042,

0.3427030444,

0.0828172192,

-0.0368684493,

-0.2374550849,

-0.1015476733,

0.0718248561,

0.4054085612,

-0.3310920596,

-0.08... |

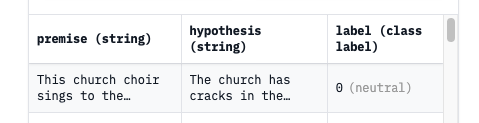

https://github.com/huggingface/datasets/issues/3673 | `load_dataset("snli")` is different from dataset viewer | Yes, we decided to replace the encoded label with the corresponding label when possible in the dataset viewer. But

1. maybe it's the wrong default

2. we could find a way to show both (with a switch, or showing both ie. `0 (neutral)`).

| ## Describe the bug

The dataset that is downloaded from the Hub via `load_dataset("snli")` is different from what is available in the dataset viewer. In the viewer the labels are not encoded (i.e., "neutral", "entailment", "contradiction"), while the downloaded dataset shows the encoded labels (i.e., 0, 1, 2).

Is t... | 43 | `load_dataset("snli")` is different from dataset viewer

## Describe the bug

The dataset that is downloaded from the Hub via `load_dataset("snli")` is different from what is available in the dataset viewer. In the viewer the labels are not encoded (i.e., "neutral", "entailment", "contradiction"), while the downloaded... | [

-0.039305117,

-0.1823725253,

-0.0017421179,

0.4216586053,

0.0142072439,

-0.0808521807,

0.5498264432,

0.0203979071,

0.2802698314,

0.2657594085,

-0.4218340218,

0.5688483119,

0.1886245906,

0.1806402951,

-0.0699716955,

0.1307980418,

0.302164346,

0.3575282693,

0.1494957656,

-0.34412... |

https://github.com/huggingface/datasets/issues/3673 | `load_dataset("snli")` is different from dataset viewer | Hi @severo,

Thanks for clarifying.

I think this default is a bit counterintuitive for the user. However, this is a personal opinion that might not be general. I think it is nice to have the actual (non-encoded) labels in the viewer. On the other hand, it would be nice to match what the user sees with what they g... | ## Describe the bug

The dataset that is downloaded from the Hub via `load_dataset("snli")` is different from what is available in the dataset viewer. In the viewer the labels are not encoded (i.e., "neutral", "entailment", "contradiction"), while the downloaded dataset shows the encoded labels (i.e., 0, 1, 2).

Is t... | 103 | `load_dataset("snli")` is different from dataset viewer

## Describe the bug

The dataset that is downloaded from the Hub via `load_dataset("snli")` is different from what is available in the dataset viewer. In the viewer the labels are not encoded (i.e., "neutral", "entailment", "contradiction"), while the downloaded... | [

-0.1473938376,

-0.2301128209,

0.0229258053,

0.3064574599,

0.105483681,

-0.079607524,

0.6571475267,

0.0317922123,

0.2773874998,

0.3631367683,

-0.294644922,

0.5051845908,