Model Card

[📜 H2OVL-Mississippi Paper] [🤗 HF Demo] [🚀 Quick Start]

The H2OVL-Mississippi-2B is a high-performing, general-purpose vision-language model developed by H2O.ai to handle a wide range of multimodal tasks. This model, with 2 billion parameters, excels in tasks such as image captioning, visual question answering (VQA), and document understanding, while maintaining efficiency for real-world applications.

The Mississippi-2B model builds on the strong foundations of our H2O-Danube language models, now extended to integrate vision and language tasks. It competes with larger models across various benchmarks, offering a versatile and scalable solution for document AI, OCR, and multimodal reasoning.

Key Features:

- 2 Billion Parameters: Balances performance and efficiency, making it suitable for document processing, OCR, VQA, and more.

- Optimized for Vision-Language Tasks: Achieves high performance across a wide range of applications, including document AI, OCR, and multimodal reasoning.

- Comprehensive Dataset: Trained on 17M image-text pairs, ensuring broad coverage and strong task generalization.

Benchmarks

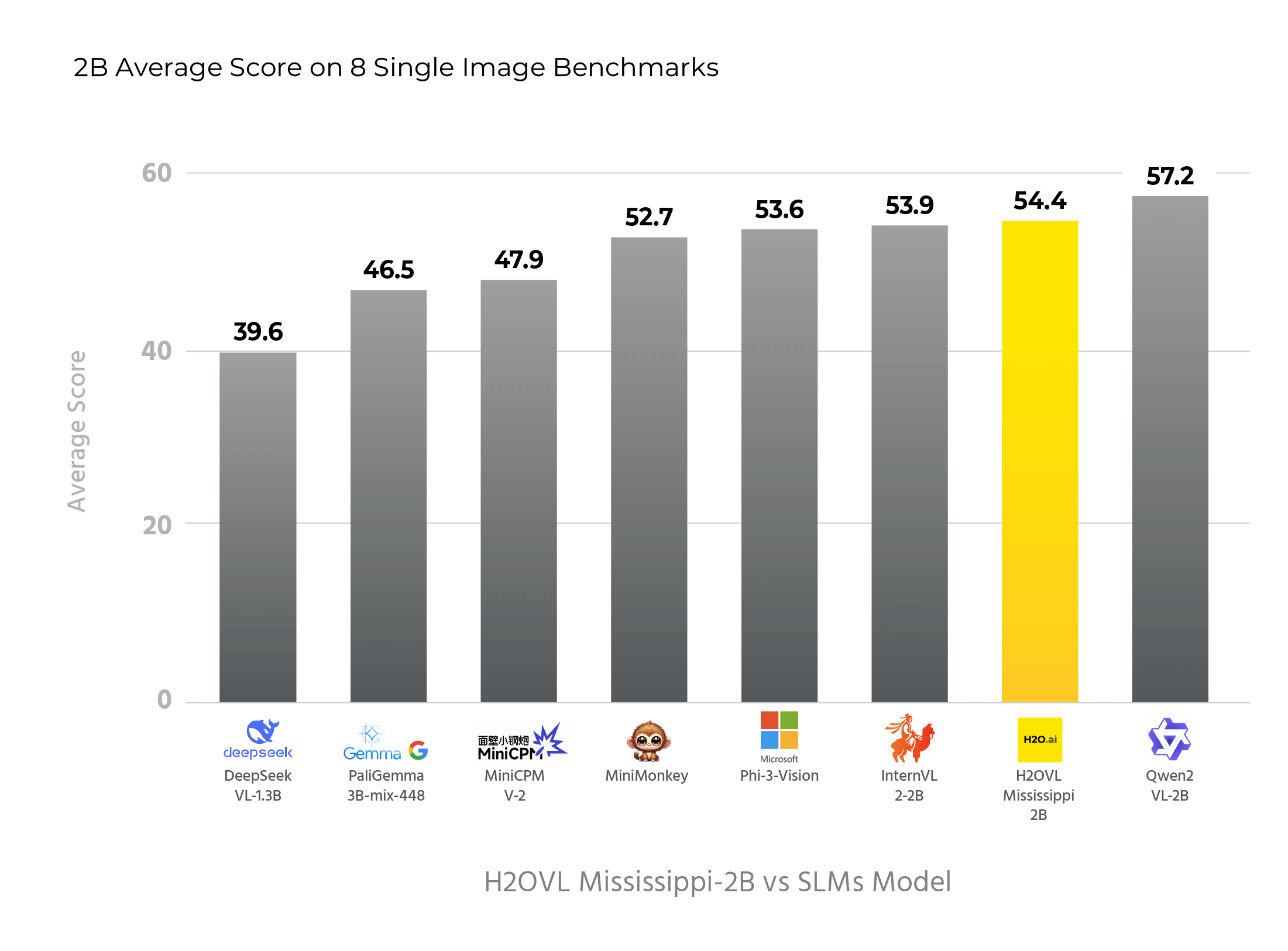

Performance Comparison of Similar Sized Models Across Multiple Benchmarks - OpenVLM Leaderboard

| Models | Params (B) | Avg. Score | MMBench | MMStar | MMMU (val) | MathVista | HallusionBench | AI2D (test) | OCRBench | MMVet |
|---|---|---|---|---|---|---|---|---|---|---|
| Qwen2-VL-2B | 2.1 | 57.2 | 72.2 | 47.5 | 42.2 | 47.8 | 42.4 | 74.7 | 797 | 51.5 |
| H2OVL-Mississippi-2B | 2.1 | 54.4 | 64.8 | 49.6 | 35.2 | 56.8 | 36.4 | 69.9 | 782 | 44.7 |
| InternVL2-2B | 2.1 | 53.9 | 69.6 | 49.8 | 36.3 | 46.0 | 38.0 | 74.1 | 781 | 39.7 |
| Phi-3-Vision | 4.2 | 53.6 | 65.2 | 47.7 | 46.1 | 44.6 | 39.0 | 78.4 | 637 | 44.1 |
| MiniMonkey | 2.2 | 52.7 | 68.9 | 48.1 | 35.7 | 45.3 | 30.9 | 73.7 | 794 | 39.8 |
| MiniCPM-V-2 | 2.8 | 47.9 | 65.8 | 39.1 | 38.2 | 39.8 | 36.1 | 62.9 | 605 | 41.0 |
| InternVL2-1B | 0.8 | 48.3 | 59.7 | 45.6 | 36.7 | 39.4 | 34.3 | 63.8 | 755 | 31.5 |
| PaliGemma-3B-mix-448 | 2.9 | 46.5 | 65.6 | 48.3 | 34.9 | 28.7 | 32.2 | 68.3 | 614 | 33.1 |
| H2OVL-Mississippi-0.8B | 0.8 | 43.5 | 47.7 | 39.1 | 34.0 | 39.0 | 29.6 | 53.6 | 751 | 30.0 |
| DeepSeek-VL-1.3B | 2.0 | 39.6 | 63.8 | 39.9 | 33.8 | 29.8 | 27.6 | 51.5 | 413 | 29.2 |
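This card does not define how the Avg. Score column is computed. The rows above are consistent with an unweighted mean over the eight benchmarks after dividing the OCRBench score by 10 to put it on a 0-100 scale. That reading is inferred from the numbers, not taken from a documented formula, so treat the sketch below as a sanity check only:

```python
# Scores copied from the table above, in the table's column order
# (the seventh value is OCRBench, which is reported on a 0-1000 scale).
ROWS = {
    "Qwen2-VL-2B": ([72.2, 47.5, 42.2, 47.8, 42.4, 74.7, 797, 51.5], 57.2),
    "H2OVL-Mississippi-2B": ([64.8, 49.6, 35.2, 56.8, 36.4, 69.9, 782, 44.7], 54.4),
    "InternVL2-2B": ([69.6, 49.8, 36.3, 46.0, 38.0, 74.1, 781, 39.7], 53.9),
}
OCRBENCH_INDEX = 6


def average_score(scores):
    """Mean of the eight scores with OCRBench rescaled to 0-100 (assumed)."""
    rescaled = [s / 10 if i == OCRBENCH_INDEX else s for i, s in enumerate(scores)]
    return sum(rescaled) / len(rescaled)


for name, (scores, reported) in ROWS.items():
    print(f"{name}: computed {average_score(scores):.2f}, reported {reported}")
```

The computed values land within rounding distance of the reported averages for these rows.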

Quick Start

We provide example code to run h2ovl-mississippi-2b using transformers.

Install dependencies:

pip install transformers torch torchvision einops timm peft sentencepiece

If you have an Ampere GPU, install flash-attention to speed up inference:

pip install flash_attn
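If you are unsure whether your GPU qualifies, the following sketch checks the CUDA compute capability. It assumes that "Ampere" corresponds to compute capability 8.0 and that newer architectures are also suitable. The helper names are illustrative, and the check falls back gracefully when PyTorch or a GPU is not present:

```python
def is_ampere_or_newer(capability):
    """True if a (major, minor) compute capability is 8.0 or higher."""
    major, _minor = capability
    return major >= 8


def detect_capability():
    """Return the compute capability of GPU 0, or None if unavailable."""
    try:
        import torch
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    return torch.cuda.get_device_capability(0)


capability = detect_capability()
if capability is None:
    print("No CUDA GPU detected; skip flash_attn.")
elif is_ampere_or_newer(capability):
    print(f"Compute capability {capability}: flash_attn should be usable.")
else:
    print(f"Compute capability {capability}: flash_attn is likely unsupported.")
```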

Inference with Transformers:

import torch
from transformers import AutoModel, AutoTokenizer

# Set up the model and tokenizer
model_path = 'h2oai/h2ovl-mississippi-2b'
model = AutoModel.from_pretrained(
    model_path,
    torch_dtype=torch.bfloat16,
    low_cpu_mem_usage=True,
    trust_remote_code=True).eval().cuda()
tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True, use_fast=False)
generation_config = dict(max_new_tokens=1024, do_sample=True)

# pure-text conversation
question = 'Hello, who are you?'
response, history = model.chat(tokenizer, None, question, generation_config, history=None, return_history=True)
print(f'User: {question}\nAssistant: {response}')

# Example for single image
image_file = './examples/image1.jpg'
question = '<image>\nPlease describe the image in detail.'
response, history = model.chat(tokenizer, image_file, question, generation_config, history=None, return_history=True)
print(f'User: {question}\nAssistant: {response}')

# Example for multiple images - multiround conversation
image_files = ['./examples/image1.jpg', './examples/image2.jpg']
question = 'Image-1: <image>\nImage-2: <image>\nDescribe the Image-1 and Image-2 in detail.'
response, history = model.chat(tokenizer, image_files, question, generation_config, history=None, return_history=True)
print(f'User: {question}\nAssistant: {response}')

# Follow-up question: pass the returned history to continue the conversation
question = 'What are the similarities and differences between these two images?'
response, history = model.chat(tokenizer, image_files, question, generation_config=generation_config, history=history, return_history=True)
print(f'User: {question}\nAssistant: {response}')

Inference with vLLM

H2OVL-Mississippi models are also supported by vLLM v0.6.4 and later.

First, install vLLM:

pip install vllm

Offline inference

from vllm import LLM, SamplingParams
from transformers import AutoTokenizer
from PIL import Image

question = "Describe this image in detail"
image = Image.open("assets/a_cat.png")
model_name = "h2oai/h2ovl-mississippi-2b"

llm = LLM(
    model=model_name,
)

tokenizer = AutoTokenizer.from_pretrained(model_name,
                                          trust_remote_code=True)

# Build the prompt with the model's chat template
messages = [{'role': 'user', 'content': f"<image>\n{question}"}]
prompt = tokenizer.apply_chat_template(messages,
                                       tokenize=False,
                                       add_generation_prompt=True)

# Stop tokens for H2OVL-Mississippi
# https://huggingface.co/h2oai/h2ovl-mississippi-2b
stop_token_ids = [tokenizer.eos_token_id]

sampling_params = SamplingParams(n=1,
                                 temperature=0.8,
                                 top_p=0.8,
                                 seed=777,  # Seed for reproducibility
                                 max_tokens=1024,
                                 stop_token_ids=stop_token_ids)

# Single prompt inference
outputs = llm.generate({
    "prompt": prompt,
    "multi_modal_data": {"image": image},
},
                       sampling_params=sampling_params)

# look at the output
for o in outputs:
    generated_text = o.outputs[0].text
    print(generated_text)

Please see more examples at https://docs.vllm.ai/en/latest/models/vlm.html#offline-inference

Online inference with OpenAI-Compatible Vision API

Run the following command to start the vLLM server with the h2ovl-mississippi-2b model:

vllm serve h2oai/h2ovl-mississippi-2b --dtype auto --api-key token-abc123

from openai import OpenAI

client = OpenAI(
    base_url="http://0.0.0.0:8000/v1",
    api_key="token-abc123",
)

# check the model name
model_name = client.models.list().data[0].id
print(model_name)

# use chat completion api
response = client.chat.completions.create(
    model=model_name,
    messages=[{
        'role': 'user',
        'content': [{
            'type': 'text',
            'text': 'describe this image in detail',
        }, {
            'type': 'image_url',
            'image_url': {
                # an image example from https://galaxyofai.com/opencv-with-python-full-tutorial-for-data-science/
                # this is a cat
                'url': 'https://galaxyofai.com/wp-content/uploads/2023/04/image-42.png',
            },
        }],
    }],
    temperature=0.8,
    top_p=0.8)

print(response)

Please see more examples at https://docs.vllm.ai/en/latest/models/vlm.html#online-inference
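The request above points at a public image URL. For a local file, one common approach is to send the image inline as a base64 data URL. The sketch below assumes the server accepts `data:` URLs in the `image_url` field, which you should confirm against the vLLM documentation for your version. The helper names `image_to_data_url` and `build_user_message` are illustrative and not part of any library:

```python
import base64
import mimetypes
from pathlib import Path


def image_to_data_url(path):
    """Encode a local image file as a base64 data URL."""
    mime, _encoding = mimetypes.guess_type(str(path))
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"not a recognised image file: {path}")
    encoded = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return f"data:{mime};base64,{encoded}"


def build_user_message(question, image_path):
    """Build a chat message in the same shape as the request above."""
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {"type": "image_url", "image_url": {"url": image_to_data_url(image_path)}},
        ],
    }
```

The returned dictionary can be passed as the single element of `messages` in `client.chat.completions.create(...)`.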

Prompt Engineering for JSON Extraction

Overview

This guide demonstrates how to create prompts for extracting information and converting it into structured JSON outputs. It starts with basic examples and progresses to more complex JSON structures, including handling data from images of tables and charts. The objective is to help users design effective prompts that can be used in various applications, such as natural language processing, chatbots, or data extraction from visual inputs.

Table of Contents

- Getting Started

- Extracting Simple Information

- Extracting Nested Information

- Extracting Lists and Arrays

- Extracting Tables

- Extracting Charts

- Best Practices

Getting Started

To get started with JSON extraction from images, it's essential to have a clear understanding of the visual content you want to extract and the structure of the desired JSON output. The following examples will guide you through crafting prompts to achieve this.

Example 1: Extracting Simple Information from an Image

Hypothetical Scenario: You have an image of a form that contains basic details like "Name," "Date of Birth," and "Address."

Prompt:

Extract the details from the form image and structure them into JSON format:

{
  "name": "",
  "date_of_birth": "",
  "address": ""
}

Expected Output:

{
  "name": "John Doe",
  "date_of_birth": "1990-01-01",
  "address": "1234 Elm Street, Springfield"
}
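In practice the prompt is usually assembled from a template, and the reply may wrap the JSON in extra text. The following is a minimal sketch of both steps under those assumptions. `build_prompt` and `parse_json_reply` are hypothetical helpers, and the `reply` string stands in for real model output:

```python
import json

TEMPLATE = {"name": "", "date_of_birth": "", "address": ""}


def build_prompt(instruction, template):
    """Append the empty JSON template to the instruction text."""
    return f"{instruction}\n{json.dumps(template, indent=2)}"


def parse_json_reply(reply):
    """Parse the first JSON object that starts at the first '{' in the reply."""
    start = reply.find("{")
    if start == -1:
        raise ValueError("no JSON object found in reply")
    obj, _end = json.JSONDecoder().raw_decode(reply[start:])
    return obj


prompt = build_prompt(
    "Extract the details from the form image and structure them into JSON format:",
    TEMPLATE,
)

# Stand-in for a model reply that surrounds the JSON with extra text.
reply = (
    'Here is the result:\n'
    '{"name": "John Doe", "date_of_birth": "1990-01-01", '
    '"address": "1234 Elm Street, Springfield"}\nDone.'
)
data = parse_json_reply(reply)
missing = set(TEMPLATE) - set(data)
print(data["name"], "| missing keys:", sorted(missing))
```

Note that this parser fails if a stray `{` appears in the text before the JSON object, so a stricter extraction step may be needed for noisy replies.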

Example 2: Extracting Nested Information from an Image

Hypothetical Scenario: You have an image of a form that contains detailed personal information, including contact details and emergency contacts.

Prompt:

Extract the information from the form and format it as follows:

{
  "personal_details": {
    "name": "",
    "age": 0,
    "gender": ""
  },
  "contact": {
    "phone": "",
    "email": ""
  },
  "emergency_contact": {
    "name": "",
    "relation": "",
    "phone": ""
  }
}

Expected Output:

{
  "personal_details": {
    "name": "Sarah Connor",
    "age": 35,
    "gender": "Female"
  },
  "contact": {
    "phone": "555-1234",
    "email": "[email protected]"
  },
  "emergency_contact": {
    "name": "Kyle Reese",
    "relation": "Friend",
    "phone": "555-5678"
  }
}
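With nested templates it becomes harder to spot a missing or mistyped field by eye. One possible check, sketched here, walks the template and the parsed reply together and reports every mismatch. `structure_errors` is a hypothetical helper, and it treats the placeholder values in the template (`""` for strings, `0` for numbers) as type hints, which is an assumption about how the template is meant to be read:

```python
def structure_errors(template, value, path="$"):
    """Return a list of places where `value` does not follow `template`."""
    if isinstance(template, dict):
        if not isinstance(value, dict):
            return [f"{path}: expected object"]
        errors = []
        for key, sub_template in template.items():
            if key not in value:
                errors.append(f"{path}.{key}: missing")
            else:
                errors.extend(structure_errors(sub_template, value[key], f"{path}.{key}"))
        return errors
    if isinstance(template, list):
        if not isinstance(value, list):
            return [f"{path}: expected array"]
        if not template:
            return []
        errors = []
        # The first template element describes every item of the array.
        for index, item in enumerate(value):
            errors.extend(structure_errors(template[0], item, f"{path}[{index}]"))
        return errors
    if isinstance(template, str):
        return [] if isinstance(value, str) else [f"{path}: expected string"]
    if isinstance(template, (int, float)) and not isinstance(template, bool):
        is_number = isinstance(value, (int, float)) and not isinstance(value, bool)
        return [] if is_number else [f"{path}: expected number"]
    return []


TEMPLATE = {
    "personal_details": {"name": "", "age": 0, "gender": ""},
    "contact": {"phone": "", "email": ""},
    "emergency_contact": {"name": "", "relation": "", "phone": ""},
}

# Illustrative reply in which "age" came back as a string and "email" is absent.
parsed = {
    "personal_details": {"name": "Sarah Connor", "age": "35", "gender": "Female"},
    "contact": {"phone": "555-1234"},
    "emergency_contact": {"name": "Kyle Reese", "relation": "Friend", "phone": "555-5678"},
}

for problem in structure_errors(TEMPLATE, parsed):
    print(problem)
```

An empty list means the reply follows the template. Extra keys in the reply are ignored by this sketch.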

Example 3: Extracting Lists and Arrays from an Image

Hypothetical Scenario: You have an image of a schedule that lists several events, their times, and locations.

Prompt:

Extract the event details from the schedule image and structure them into JSON:

{
  "events": [
    {
      "name": "",
      "time": "",
      "location": ""
    }
  ]
}

Expected Output:

{
  "events": [
    {
      "name": "Morning Meeting",
      "time": "09:00 AM",
      "location": "Conference Room 1"
    },
    {
      "name": "Lunch Break",
      "time": "12:00 PM",
      "location": "Cafeteria"
    },
    {
      "name": "Project Update",
      "time": "02:00 PM",
      "location": "Conference Room 2"
    }
  ]
}
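Extracted list items often need light normalization before they are useful downstream. As one illustration, the sketch below converts the 12-hour times from the expected output into 24-hour strings and sorts the events. It assumes the model returns times in the `HH:MM AM/PM` form shown above. The helper names are illustrative:

```python
from datetime import datetime

# Events as they might come back from the model, in arbitrary order.
events = [
    {"name": "Project Update", "time": "02:00 PM", "location": "Conference Room 2"},
    {"name": "Morning Meeting", "time": "09:00 AM", "location": "Conference Room 1"},
    {"name": "Lunch Break", "time": "12:00 PM", "location": "Cafeteria"},
]


def to_24h(time_text):
    """Convert 'HH:MM AM/PM' to a zero-padded 24-hour 'HH:MM' string."""
    return datetime.strptime(time_text.strip(), "%I:%M %p").strftime("%H:%M")


def normalize_events(items):
    """Return copies of the events with 24-hour times, sorted by time."""
    normalized = [{**event, "time": to_24h(event["time"])} for event in items]
    return sorted(normalized, key=lambda event: event["time"])


schedule = normalize_events(events)
for event in schedule:
    print(event["time"], event["name"], "@", event["location"])
```

`strptime` raises `ValueError` on a time that does not match the assumed format, which is a useful signal that the prompt should state the expected time format explicitly.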

Example 4: Extracting Table Data from an Image

Images of tables often contain structured data that needs to be parsed and converted to JSON. The following example demonstrates how to handle tabular data extraction.

Hypothetical Scenario: You have an image of a table listing product names, prices, and quantities.

Prompt:

Extract the data from the table image and format it as JSON:

{
  "products": [
    {
      "product_name": "",
      "price": "",
      "quantity": 0
    }
  ]
}

Expected Output:

{
  "products": [
    {
      "product_name": "Apples",
      "price": "$2",
      "quantity": 10
    },
    {
      "product_name": "Bananas",
      "price": "$1",
      "quantity": 20
    },
    {
      "product_name": "Oranges",
      "price": "$3",
      "quantity": 15
    }
  ]
}
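Because the template asks for `price` as a string, the values keep their currency symbol and need parsing before any arithmetic. A small sketch of that step follows, assuming dollar-prefixed prices as in the expected output. `parse_price` is an illustrative helper:

```python
from decimal import Decimal

products = [
    {"product_name": "Apples", "price": "$2", "quantity": 10},
    {"product_name": "Bananas", "price": "$1", "quantity": 20},
    {"product_name": "Oranges", "price": "$3", "quantity": 15},
]


def parse_price(text):
    """Strip a leading '$' and thousands separators, then parse as Decimal."""
    cleaned = text.strip().lstrip("$").replace(",", "")
    return Decimal(cleaned)


# Total value of the listed stock: price times quantity, summed over rows.
total = sum(parse_price(p["price"]) * p["quantity"] for p in products)
print(f"Total value: ${total}")
```

`Decimal` is used instead of `float` so that prices such as `$0.10` do not pick up binary rounding error.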

Example 5: Extracting Chart Data from an Image

Charts include metadata and data points that need to be accurately extracted. Here's how to structure prompts to extract chart data from images.

Hypothetical Scenario: You have an image of a bar chart that shows monthly sales figures.

Prompt:

Extract the details of the bar chart from the image, including the title, axis labels, and data points, and format them as JSON:

{
  "chart": {
    "title": "",
    "x_axis": "",
    "y_axis": "",
    "data_points": [
      {
        "label": "",
        "value": 0
      }
    ]
  }
}

Expected Output:

{
  "chart": {
    "title": "Monthly Sales Report",
    "x_axis": "Months",
    "y_axis": "Sales (in $)",
    "data_points": [
      {
        "label": "January",
        "value": 500
      },
      {
        "label": "February",
        "value": 600
      },
      {
        "label": "March",
        "value": 700
      }
    ]
  }
}
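Values read off a chart are easy to get subtly wrong, so a quick summary of the extracted points can help when comparing against the image. The sketch below works on the expected output above. `summarize_chart` is a hypothetical helper, and rejecting duplicate labels is a design choice of this sketch rather than a requirement of the format:

```python
chart = {
    "title": "Monthly Sales Report",
    "x_axis": "Months",
    "y_axis": "Sales (in $)",
    "data_points": [
        {"label": "January", "value": 500},
        {"label": "February", "value": 600},
        {"label": "March", "value": 700},
    ],
}


def summarize_chart(chart_obj):
    """Return the series as a mapping plus its total and step-to-step changes."""
    points = chart_obj["data_points"]
    labels = [p["label"] for p in points]
    if len(set(labels)) != len(labels):
        raise ValueError("duplicate labels in data_points")
    values = [p["value"] for p in points]
    changes = [later - earlier for earlier, later in zip(values, values[1:])]
    return {
        "series": dict(zip(labels, values)),
        "total": sum(values),
        "changes": changes,
    }


summary = summarize_chart(chart)
print(summary["series"])
print("total:", summary["total"], "changes:", summary["changes"])
```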

Best Practices

- Be Explicit: Clearly define the desired keys and structure in your prompt to avoid ambiguity.

- Use Examples: Provide sample outputs so that the system can understand the expected format.

- Anticipate Variations: Consider possible variations in the visual data and ensure the prompt can accommodate them.

- Start Simple: Begin with simple structures, and progressively increase complexity as needed.

- Test and Iterate: Refine your prompts through testing to ensure accuracy and consistency in outputs.
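The "Test and Iterate" practice can be partly automated by checking each reply and asking again when it is unusable. The following is a minimal sketch of such a loop. `ask_model` is a hypothetical callable that takes a prompt string and returns the reply text (for example, a thin wrapper around the `model.chat` call from the Quick Start), and `extract_with_retries` is likewise an illustrative name:

```python
import json


def extract_with_retries(ask_model, prompt, required_keys, max_attempts=3):
    """Call `ask_model` until the reply is a JSON object with all required keys."""
    last_error = "no attempts made"
    for attempt in range(1, max_attempts + 1):
        reply = ask_model(prompt)
        try:
            data = json.loads(reply)
        except json.JSONDecodeError as exc:
            last_error = f"invalid JSON: {exc.msg}"
            continue
        if not isinstance(data, dict):
            last_error = "reply is not a JSON object"
            continue
        missing = [key for key in required_keys if key not in data]
        if not missing:
            return data, attempt
        last_error = f"missing keys: {missing}"
    raise ValueError(f"no valid reply after {max_attempts} attempts ({last_error})")


# Stand-in model for demonstration: the first reply is malformed, the second is fine.
canned_replies = iter(['{"name": "John Doe"', '{"name": "John Doe", "address": "1234 Elm Street"}'])
result, attempts = extract_with_retries(
    lambda _prompt: next(canned_replies),
    "Extract the name and address as JSON.",
    required_keys=["name", "address"],
)
print(result, "after", attempts, "attempt(s)")
```

Retrying only helps when sampling is enabled, as it is with `do_sample=True` in the Quick Start; with deterministic decoding the same prompt yields the same reply, so the prompt itself needs to change between attempts.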

Acknowledgments

We would like to express our gratitude to the InternVL team at OpenGVLab for their research and codebases, upon which we have built and expanded. We also acknowledge the work of the LLaVA team and the Monkey team for their insights and techniques used in improving multimodal models.

Disclaimer

Please read this disclaimer carefully before using the large language model provided in this repository. Your use of the model signifies your agreement to the following terms and conditions.

- Biases and Offensiveness: The large language model is trained on a diverse range of internet text data, which may contain biased, racist, offensive, or otherwise inappropriate content. By using this model, you acknowledge and accept that the generated content may sometimes exhibit biases or produce content that is offensive or inappropriate. The developers of this repository do not endorse, support, or promote any such content or viewpoints.

- Limitations: The large language model is an AI-based tool and not a human. It may produce incorrect, nonsensical, or irrelevant responses. It is the user's responsibility to critically evaluate the generated content and use it at their discretion.

- Use at Your Own Risk: Users of this large language model must assume full responsibility for any consequences that may arise from their use of the tool. The developers and contributors of this repository shall not be held liable for any damages, losses, or harm resulting from the use or misuse of the provided model.

- Ethical Considerations: Users are encouraged to use the large language model responsibly and ethically. By using this model, you agree not to use it for purposes that promote hate speech, discrimination, harassment, or any form of illegal or harmful activities.

- Reporting Issues: If you encounter any biased, offensive, or otherwise inappropriate content generated by the large language model, please report it to the repository maintainers through the provided channels. Your feedback will help improve the model and mitigate potential issues.

- Changes to this Disclaimer: The developers of this repository reserve the right to modify or update this disclaimer at any time without prior notice. It is the user's responsibility to periodically review the disclaimer to stay informed about any changes.

By using the large language model provided in this repository, you agree to accept and comply with the terms and conditions outlined in this disclaimer. If you do not agree with any part of this disclaimer, you should refrain from using the model and any content generated by it.
