OpenSDI-Flux.1-SigLIP2

OpenSDI-Flux.1-SigLIP2 is a vision-language encoder model fine-tuned from google/siglip2-base-patch16-224 for binary image classification. Built on the SiglipForImageClassification architecture, it is trained to detect whether an image is a real photograph or was generated by the Flux.1 generative model.

SigLIP 2: Multilingual Vision-Language Encoders with Improved Semantic Understanding, Localization, and Dense Features https://arxiv.org/pdf/2502.14786

OpenSDI: Spotting Diffusion-Generated Images in the Open World https://arxiv.org/pdf/2503.19653

OpenSDI-Flux.1-SigLIP2 works best with crisp, high-quality images. Noisy images are not recommended for validation.

This model is recommended for image content moderation and for classifying AI-generated versus real images.

Classification Report:

| Class | Precision | Recall | F1-score | Support |
|---|---|---|---|---|
| Real_Image | 0.9108 | 0.9238 | 0.9172 | 10000 |
| Flux.1_Generated | 0.9227 | 0.9095 | 0.9160 | 10000 |
| accuracy | | | 0.9166 | 20000 |
| macro avg | 0.9167 | 0.9166 | 0.9166 | 20000 |
| weighted avg | 0.9167 | 0.9166 | 0.9166 | 20000 |
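As a sanity check on how the report's figures relate to each other, the sketch below recomputes the F1 scores, overall accuracy, and macro-averaged precision from the per-class precision, recall, and support listed above. The helper name `f1_score` and the `report` dictionary are illustrative, not part of the model's tooling. Because the inputs are already rounded to four decimals, the recomputed values should agree with the report only to within rounding.

```python
# Per-class precision, recall, and support copied from the classification report.
report = {
    "Real_Image": {"precision": 0.9108, "recall": 0.9238, "support": 10000},
    "Flux.1_Generated": {"precision": 0.9227, "recall": 0.9095, "support": 10000},
}


def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


f1 = {name: f1_score(m["precision"], m["recall"]) for name, m in report.items()}

# recall * support is the number of correctly classified images in each class,
# so summing over classes and dividing by the total gives overall accuracy.
total = sum(m["support"] for m in report.values())
accuracy = sum(m["recall"] * m["support"] for m in report.values()) / total

# Macro average: unweighted mean over classes.
macro_precision = sum(m["precision"] for m in report.values()) / len(report)

for name, value in f1.items():
    print(f"{name}: F1 = {value:.4f}")
print(f"accuracy = {accuracy:.4f}, macro precision = {macro_precision:.4f}")
```

Since both classes have the same support, the macro and weighted averages coincide, which matches the identical last two rows of the report.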

Label Space: 2 Classes

The model classifies an image as either:

Class 0: Real_Image

Class 1: Flux.1_Generated
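To illustrate how the two class indices are used, here is a minimal sketch that turns a pair of raw logits into a label and a confidence score. It uses plain Python rather than the model itself; the logit values are made up for illustration, and `predict_label` is a hypothetical helper name.

```python
import math

# Index-to-label mapping as listed above.
ID2LABEL = {0: "Real_Image", 1: "Flux.1_Generated"}


def softmax(logits):
    """Convert raw logits to probabilities (shifted by the max for stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_label(logits):
    """Return (label, probability) for the highest-scoring class."""
    probs = softmax(logits)
    idx = max(range(len(probs)), key=probs.__getitem__)
    return ID2LABEL[idx], probs[idx]


# Made-up logits: index 1 has the larger score, so the label is Flux.1_Generated.
label, confidence = predict_label([-1.2, 2.3])
print(label, round(confidence, 3))
```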

Install Dependencies

pip install -q transformers torch pillow gradio hf_xet

Inference Code

import gradio as gr
from transformers import AutoImageProcessor, SiglipForImageClassification
from PIL import Image
import torch

# Load model and processor
model_name = "prithivMLmods/OpenSDI-Flux.1-SigLIP2"  # Update if needed
model = SiglipForImageClassification.from_pretrained(model_name)
processor = AutoImageProcessor.from_pretrained(model_name)

# Label mapping
id2label = {
    "0": "Real_Image",
    "1": "Flux.1_Generated"
}

def classify_image(image):
    # Gradio passes the upload as a NumPy array; convert it to an RGB PIL image
    image = Image.fromarray(image).convert("RGB")
    inputs = processor(images=image, return_tensors="pt")

    with torch.no_grad():
        outputs = model(**inputs)
        logits = outputs.logits
        probs = torch.nn.functional.softmax(logits, dim=1).squeeze().tolist()

    # Map each class index to its label and rounded probability
    prediction = {
        id2label[str(i)]: round(probs[i], 3) for i in range(len(probs))
    }

    return prediction

# Gradio Interface
iface = gr.Interface(
    fn=classify_image,
    inputs=gr.Image(type="numpy"),
    outputs=gr.Label(num_top_classes=2, label="Flux.1 Image Detection"),
    title="OpenSDI-Flux.1-SigLIP2",
    description="Upload an image to determine whether it is a real photograph or generated by Flux.1."
)

if __name__ == "__main__":
    iface.launch()
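For scripted use without the Gradio interface, the same steps can be wrapped in a plain function that takes a file path. This is a minimal sketch under a few assumptions: `classify_file` and `format_scores` are hypothetical helper names, the model is reloaded on every call for simplicity, and the heavy imports are placed inside the function so that the formatting helper can be used on its own.

```python
from pathlib import Path

MODEL_NAME = "prithivMLmods/OpenSDI-Flux.1-SigLIP2"
ID2LABEL = {0: "Real_Image", 1: "Flux.1_Generated"}


def format_scores(probs):
    """Map a list of class probabilities to {label: rounded score}."""
    return {ID2LABEL[i]: round(p, 3) for i, p in enumerate(probs)}


def classify_file(path):
    """Classify a single image file; downloads the model on first use."""
    # Imported here so format_scores stays usable without these packages.
    import torch
    from PIL import Image
    from transformers import AutoImageProcessor, SiglipForImageClassification

    model = SiglipForImageClassification.from_pretrained(MODEL_NAME)
    processor = AutoImageProcessor.from_pretrained(MODEL_NAME)

    image = Image.open(Path(path)).convert("RGB")
    inputs = processor(images=image, return_tensors="pt")

    with torch.no_grad():
        logits = model(**inputs).logits

    # One image in, so drop the batch dimension before converting to a list.
    probs = torch.softmax(logits, dim=-1).squeeze(0).tolist()
    return format_scores(probs)
```

Calling `classify_file("photo.jpg")` (a placeholder path) would return a dictionary with one score per label. For many images, loading the model once outside the function would avoid repeated setup cost.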

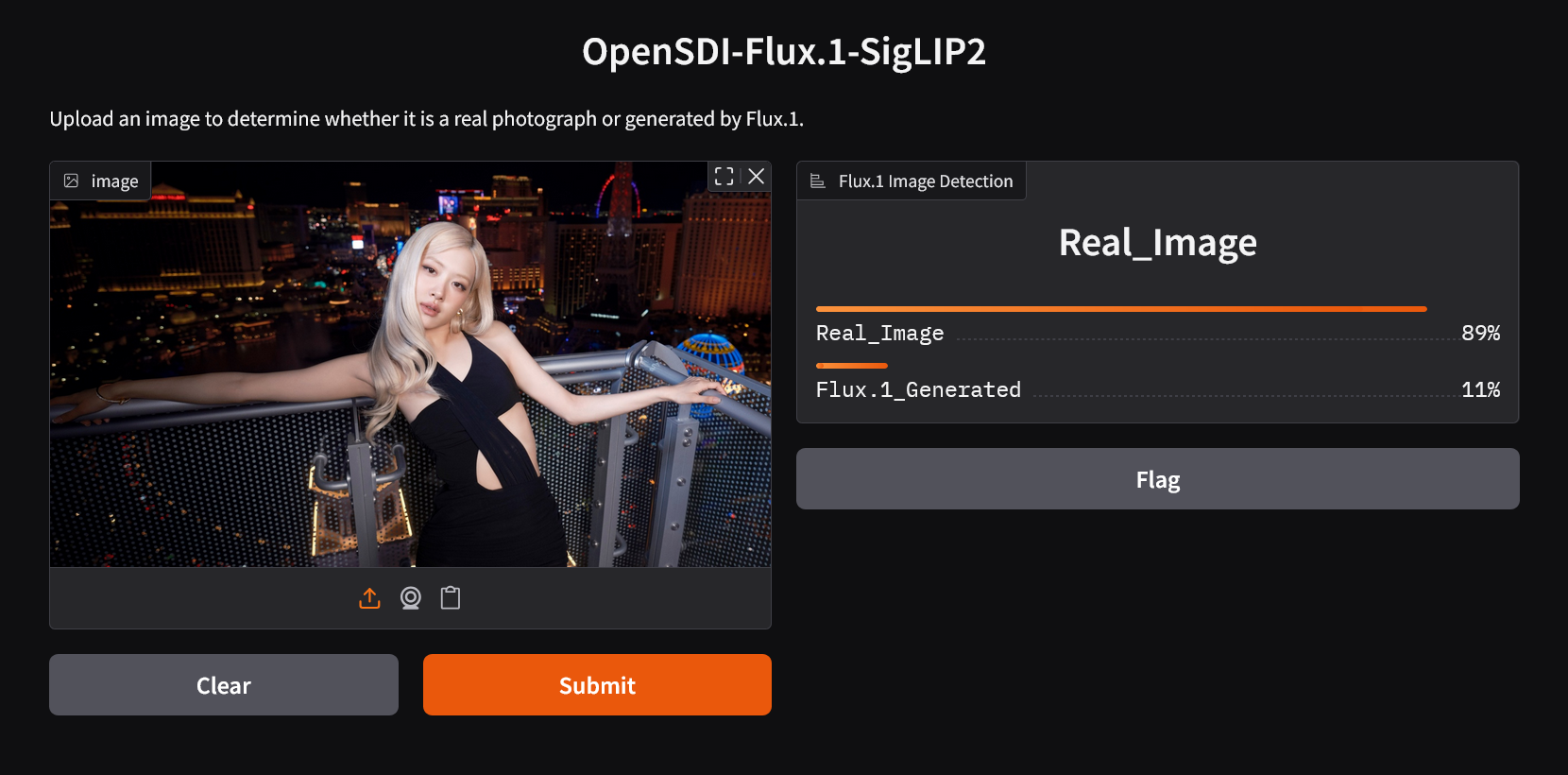

Demo Inference

Flux.1 Generated

Real Image

Intended Use

OpenSDI-Flux.1-SigLIP2 is designed for tasks such as:

- Generative Model Evaluation – Distinguish Flux.1-generated images from real photos for benchmarking and validation.

- Dataset Auditing – Detect synthetic images in real-world datasets to maintain integrity.

- Misinformation Detection – Identify AI-generated visuals in online or news content.

- Media Authentication – Verify whether visual content originates from human-captured or model-generated sources.
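For a use case such as dataset auditing, the per-image scores can be reduced to a list of flagged files. The sketch below assumes predictions are already available in the `{label: score}` form returned by the inference code; the file names, scores, `flag_synthetic` helper, and the 0.5 threshold are all illustrative assumptions, and a suitable threshold would need to be chosen for the dataset at hand.

```python
def flag_synthetic(predictions, threshold=0.5):
    """Return file names whose Flux.1_Generated score meets the threshold."""
    return [
        name
        for name, scores in predictions.items()
        if scores["Flux.1_Generated"] >= threshold
    ]


# Made-up scores standing in for model outputs on three files.
example_predictions = {
    "img_001.png": {"Real_Image": 0.912, "Flux.1_Generated": 0.088},
    "img_002.png": {"Real_Image": 0.041, "Flux.1_Generated": 0.959},
    "img_003.png": {"Real_Image": 0.430, "Flux.1_Generated": 0.570},
}

flagged = flag_synthetic(example_predictions)
strict = flag_synthetic(example_predictions, threshold=0.9)
print(flagged)
print(strict)
```

Raising the threshold trades missed synthetic images for fewer false flags on real photos, which may matter given the roughly 92% accuracy in the classification report.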
