ResNet-3D: Optimized for Mobile Deployment

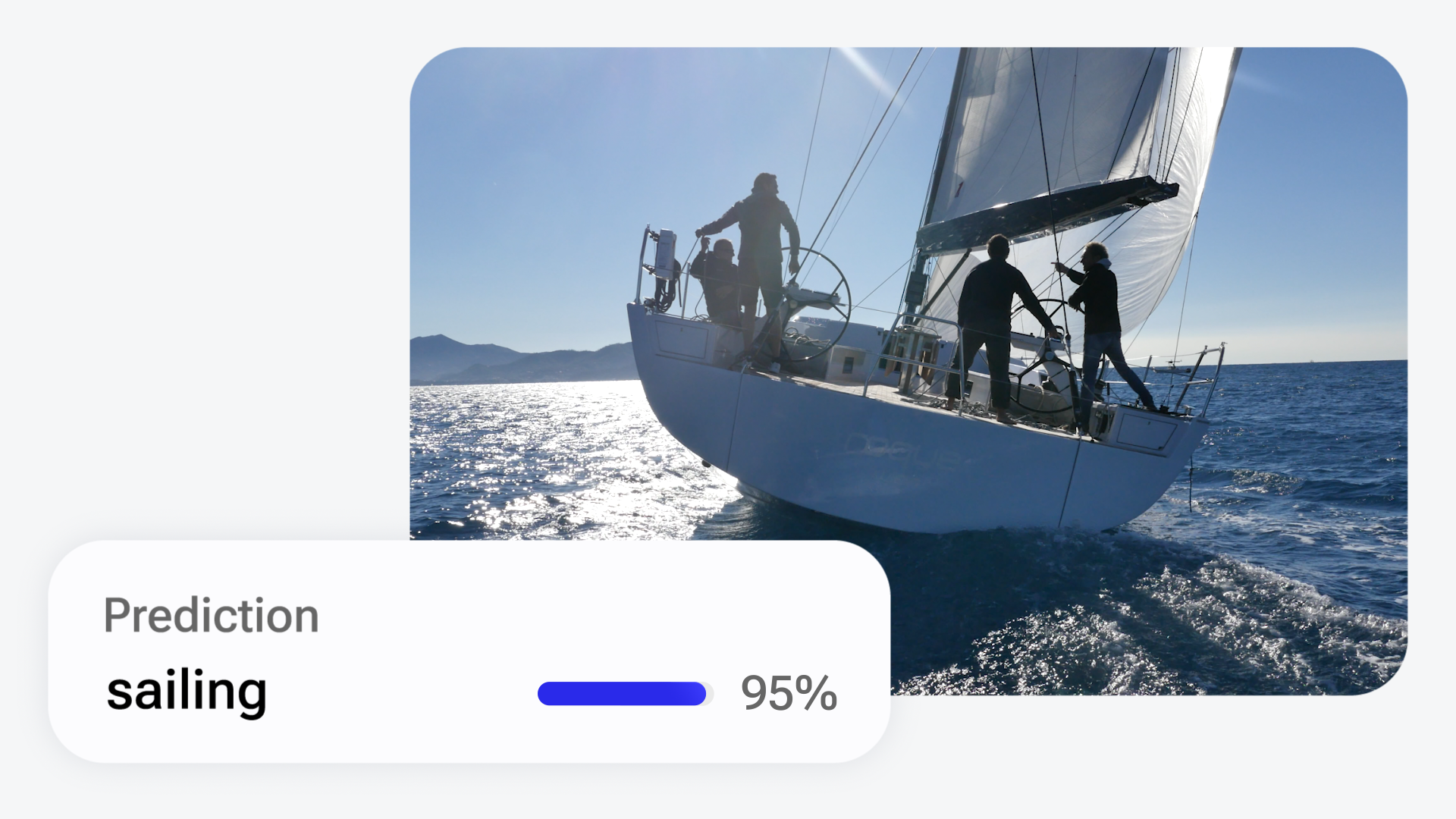

Sports and human action recognition in videos

ResNet-3D is a network that uses 3D convolutions for video understanding.

This model is an implementation of ResNet-3D found here.

This repository provides scripts to run ResNet-3D on Qualcomm® devices. More details on model performance across various devices can be found here.
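Unlike a 2D image convolution, a 3D kernel also slides along the time axis of a clip, so each layer maps a (frames, height, width) volume to another volume. The sketch below applies the standard convolution output-size formula to each axis and counts one layer's weights. The 16-frame clip length and the stem configuration (modeled on torchvision's r3d_18) are assumptions for illustration, not values stated on this page; only the 112x112 resolution comes from Model Details.

```python
def conv3d_output_shape(in_thw, kernel, stride, padding, dilation=(1, 1, 1)):
    """Output (T, H, W) of a 3D convolution, applying the standard
    out = floor((in + 2*pad - dil*(k - 1) - 1) / stride) + 1 to each axis."""
    return tuple(
        (i + 2 * p - d * (k - 1) - 1) // s + 1
        for i, k, s, p, d in zip(in_thw, kernel, stride, padding, dilation)
    )


def conv3d_weight_count(c_in, c_out, kernel, bias=False):
    """Learnable parameters in one Conv3d layer: one kt x kh x kw kernel per (in, out) channel pair."""
    kt, kh, kw = kernel
    return c_in * c_out * kt * kh * kw + (c_out if bias else 0)


# Assumed input clip: 16 RGB frames at the 112x112 resolution listed under Model Details.
clip_thw = (16, 112, 112)

# Assumed stem, modeled on torchvision's r3d_18: 3 -> 64 channels,
# kernel 3x7x7 (time x height x width), stride (1, 2, 2), padding (1, 3, 3), no bias.
stem_kernel = (3, 7, 7)
print(conv3d_output_shape(clip_thw, stem_kernel, stride=(1, 2, 2), padding=(1, 3, 3)))  # (16, 56, 56)
print(conv3d_weight_count(3, 64, stem_kernel))  # 28224
```

With stride 1 in time and 2 in space, this assumed stem keeps all 16 frames while halving each spatial side.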

Model Details

- Model Type: Video classification
- Model Stats:
  - Model checkpoint: Kinetics-400
  - Input resolution: 112x112
  - Number of parameters: 33.4M
  - Model size (float): 127 MB
  - Model size (w8a8): 32.1 MB
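As a sanity check, the listed sizes line up with simple bytes-per-weight arithmetic if "MB" is read as mebibytes (2^20 bytes): 4 bytes per float32 weight, and roughly 1 byte per weight for w8a8. This is a back-of-the-envelope sketch; the small gap to the listed w8a8 size presumably comes from quantization parameters and file metadata, which it does not model.

```python
# Parameter count from Model Details.
NUM_PARAMS = 33.4e6


def size_mib(num_params: float, bytes_per_param: int) -> float:
    """Approximate weight storage in MiB (2**20 bytes), ignoring metadata."""
    return num_params * bytes_per_param / 2**20


float_mib = size_mib(NUM_PARAMS, 4)  # float32: 4 bytes per weight
int8_mib = size_mib(NUM_PARAMS, 1)   # w8a8: 8-bit weights, 1 byte each

print(f"float32: ~{float_mib:.1f} MiB")  # ~127.4 MiB (listed: 127 MB)
print(f"w8a8:    ~{int8_mib:.1f} MiB")   # ~31.9 MiB (listed: 32.1 MB)
```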

| Model | Precision | Device | Chipset | Target Runtime | Inference Time (ms) | Peak Memory Range (MB) | Primary Compute Unit | Target Model |
|---|---|---|---|---|---|---|---|---|
| ResNet-3D | float | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | TFLITE | 109.791 ms | 29 - 71 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | QNN_DLC | 93.682 ms | 0 - 66 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | TFLITE | 34.821 ms | 29 - 72 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | QNN_DLC | 28.396 ms | 2 - 65 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | TFLITE | 21.807 ms | 3 - 1032 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | QNN_DLC | 14.861 ms | 2 - 22 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | TFLITE | 36.144 ms | 29 - 71 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | QNN_DLC | 26.0 ms | 2 - 57 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | SA7255P ADP | Qualcomm® SA7255P | TFLITE | 109.791 ms | 29 - 71 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | SA7255P ADP | Qualcomm® SA7255P | QNN_DLC | 93.682 ms | 0 - 66 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | SA8255 (Proxy) | Qualcomm® SA8255P (Proxy) | TFLITE | 22.768 ms | 1 - 1032 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | SA8255 (Proxy) | Qualcomm® SA8255P (Proxy) | QNN_DLC | 14.907 ms | 2 - 23 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | SA8295P ADP | Qualcomm® SA8295P | TFLITE | 38.657 ms | 29 - 58 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | SA8295P ADP | Qualcomm® SA8295P | QNN_DLC | 27.23 ms | 2 - 51 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | SA8650 (Proxy) | Qualcomm® SA8650P (Proxy) | TFLITE | 22.141 ms | 0 - 1044 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | SA8650 (Proxy) | Qualcomm® SA8650P (Proxy) | QNN_DLC | 14.872 ms | 2 - 28 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | SA8775P ADP | Qualcomm® SA8775P | TFLITE | 36.144 ms | 29 - 71 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | SA8775P ADP | Qualcomm® SA8775P | QNN_DLC | 26.0 ms | 2 - 57 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | TFLITE | 22.376 ms | 2 - 1030 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | QNN_DLC | 15.027 ms | 2 - 24 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | ONNX | 13.642 ms | 0 - 207 MB | NPU | ResNet-3D.onnx |
| ResNet-3D | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | TFLITE | 16.401 ms | 28 - 85 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | QNN_DLC | 10.802 ms | 2 - 71 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | ONNX | 10.705 ms | 2 - 73 MB | NPU | ResNet-3D.onnx |
| ResNet-3D | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | TFLITE | 17.428 ms | 27 - 67 MB | NPU | ResNet-3D.tflite |
| ResNet-3D | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | QNN_DLC | 9.932 ms | 2 - 63 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | ONNX | 10.005 ms | 2 - 55 MB | NPU | ResNet-3D.onnx |
| ResNet-3D | float | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN_DLC | 15.739 ms | 1018 - 1018 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | float | Snapdragon X Elite CRD | Snapdragon® X Elite | ONNX | 14.52 ms | 63 - 63 MB | NPU | ResNet-3D.onnx |
| ResNet-3D | w8a8 | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | QNN_DLC | 13.387 ms | 0 - 31 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | QNN_DLC | 6.079 ms | 1 - 80 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | QNN_DLC | 4.025 ms | 0 - 11 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | QNN_DLC | 4.447 ms | 1 - 33 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | RB3 Gen 2 (Proxy) | Qualcomm® QCS6490 (Proxy) | QNN_DLC | 31.537 ms | 1 - 51 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | SA7255P ADP | Qualcomm® SA7255P | QNN_DLC | 13.387 ms | 0 - 31 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | SA8255 (Proxy) | Qualcomm® SA8255P (Proxy) | QNN_DLC | 4.022 ms | 1 - 14 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | SA8295P ADP | Qualcomm® SA8295P | QNN_DLC | 7.484 ms | 1 - 34 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | SA8650 (Proxy) | Qualcomm® SA8650P (Proxy) | QNN_DLC | 4.026 ms | 0 - 11 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | SA8775P ADP | Qualcomm® SA8775P | QNN_DLC | 4.447 ms | 1 - 33 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | QNN_DLC | 4.006 ms | 1 - 12 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | ONNX | 3.944 ms | 0 - 42 MB | NPU | ResNet-3D.onnx |
| ResNet-3D | w8a8 | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | QNN_DLC | 2.935 ms | 1 - 76 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | ONNX | 2.915 ms | 0 - 86 MB | NPU | ResNet-3D.onnx |
| ResNet-3D | w8a8 | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | QNN_DLC | 2.783 ms | 1 - 38 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | ONNX | 2.804 ms | 0 - 41 MB | NPU | ResNet-3D.onnx |
| ResNet-3D | w8a8 | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN_DLC | 5.047 ms | 382 - 382 MB | NPU | ResNet-3D.dlc |
| ResNet-3D | w8a8 | Snapdragon X Elite CRD | Snapdragon® X Elite | ONNX | 4.378 ms | 33 - 33 MB | NPU | ResNet-3D.onnx |
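One way to read the table is as the effect of w8a8 quantization on latency. The sketch below recomputes the float-to-w8a8 speedup and the implied inferences per second from the QNN_DLC rows of four devices, with values copied from the table. Treat the throughput figure as an upper bound: it assumes back-to-back inferences and ignores pre- and post-processing.

```python
# QNN_DLC latencies (ms) copied from the table: (float, w8a8).
qnn_dlc_latency_ms = {
    "Samsung Galaxy S23": (15.027, 4.006),
    "Samsung Galaxy S24": (10.802, 2.935),
    "Snapdragon 8 Elite QRD": (9.932, 2.783),
    "Snapdragon X Elite CRD": (15.739, 5.047),
}


def speedup(float_ms: float, quant_ms: float) -> float:
    """How many times faster the w8a8 model runs than the float model."""
    return float_ms / quant_ms


def inferences_per_second(latency_ms: float) -> float:
    """Upper-bound throughput for back-to-back inferences at a fixed latency."""
    return 1000.0 / latency_ms


for device, (float_ms, quant_ms) in qnn_dlc_latency_ms.items():
    print(
        f"{device}: w8a8 is {speedup(float_ms, quant_ms):.2f}x faster, "
        f"up to ~{inferences_per_second(quant_ms):.0f} inferences/s"
    )
```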

Installation

Install the package via pip:

```
pip install "qai-hub-models[resnet-3d]"
```

Configure Qualcomm® AI Hub to run this model on a cloud-hosted device

Sign in to Qualcomm® AI Hub with your Qualcomm® ID. Once signed in, navigate to Account -> Settings -> API Token.

With this API token, you can configure your client to run models on the cloud-hosted devices.

```
qai-hub configure --api_token API_TOKEN
```

Navigate to docs for more information.

Demo off target

The package contains a simple end-to-end demo that downloads pre-trained weights and runs this model on a sample input.

```
python -m qai_hub_models.models.resnet_3d.demo
```

The above demo runs a reference implementation of pre-processing, model inference, and post-processing.

NOTE: If you want to run this in a Jupyter Notebook or a Google Colab-like environment, add the following to your cell instead of the above command.

```
%run -m qai_hub_models.models.resnet_3d.demo
```
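The demo's post-processing step turns the model's class scores into readable predictions. A common approach for Kinetics-400 classifiers is a softmax over the class scores followed by a top-k selection; the sketch below shows that technique in plain Python. It assumes the model emits one unnormalized score (logit) per class, and the four labels and logits are hypothetical placeholders, not output from this model or from the package's own post-processing code.

```python
import math


def softmax(logits):
    """Convert raw scores to probabilities that sum to 1."""
    # Subtracting the max logit first avoids overflow in math.exp.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(logits, labels, k=5):
    """Return the k (label, probability) pairs with the highest probability."""
    probs = softmax(logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(labels[i], probs[i]) for i in ranked[:k]]


# Hypothetical 4-class example; a Kinetics-400 model would produce 400 scores.
labels = ["archery", "bowling", "juggling balls", "surfing water"]
logits = [0.5, 3.0, 1.0, -2.0]

for label, prob in top_k(logits, labels, k=2):
    print(f"{label}: {prob:.3f}")
```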

Run model on a cloud-hosted device

In addition to the demo, you can also run the model on a cloud-hosted Qualcomm® device. This script does the following:

- Runs a performance check on a cloud-hosted device.
- Downloads compiled assets that can be deployed on-device for Android.
- Checks accuracy between PyTorch and on-device outputs.

```
python -m qai_hub_models.models.resnet_3d.export
```

```
Profiling Results
------------------------------------------------------------
ResNet-3D
Device                          : cs_8275 (ANDROID 14)
Runtime                         : TFLITE
Estimated inference time (ms)   : 109.8
Estimated peak memory usage (MB): [29, 71]
Total # Ops                     : 57
Compute Unit(s)                 : npu (53 ops) gpu (0 ops) cpu (4 ops)
```
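The Compute Unit(s) line accounts for every op in the model: 53 + 0 + 4 = 57, matching Total # Ops, so about 93% of ops run on the NPU and 4 fall back to the CPU. The sketch below parses that line in the format shown in the summary above; the regular expression is an assumption based on this one sample, not a documented output format.

```python
import re

# The "Compute Unit(s)" value and "Total # Ops" from the profiling summary.
COMPUTE_UNITS = "npu (53 ops) gpu (0 ops) cpu (4 ops)"
TOTAL_OPS = 57


def parse_compute_units(text):
    """Map each compute unit to its op count, e.g. {'npu': 53, 'gpu': 0, 'cpu': 4}."""
    return {unit: int(count) for unit, count in re.findall(r"(\w+) \((\d+) ops\)", text)}


units = parse_compute_units(COMPUTE_UNITS)
assert sum(units.values()) == TOTAL_OPS  # every op is assigned to exactly one unit
npu_share = units["npu"] / TOTAL_OPS
print(f"{npu_share:.1%} of ops run on the NPU; {units['cpu']} fall back to the CPU")
```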

How does this work?

This export script leverages Qualcomm® AI Hub to optimize, validate, and deploy this model on-device. Let's go through each step in detail:

Step 1: Compile model for on-device deployment

To compile a PyTorch model for on-device deployment, we first trace the model in memory using torch.jit.trace and then call the submit_compile_job API.

```python
import torch

import qai_hub as hub
from qai_hub_models.models.resnet_3d import Model

# Load the model with pre-trained weights
torch_model = Model.from_pretrained()

# Device
device = hub.Device("Samsung Galaxy S24")

# Trace model: sample_inputs() maps each input name to a list of arrays;
# trace with the first sample of each input.
input_shape = torch_model.get_input_spec()
sample_inputs = torch_model.sample_inputs()
pt_model = torch.jit.trace(torch_model, [torch.tensor(data[0]) for _, data in sample_inputs.items()])

# Compile model on a specific device
compile_job = hub.submit_compile_job(
    model=pt_model,
    device=device,
    input_specs=input_shape,
)

# Get target model to run on-device
target_model = compile_job.get_target_model()
```

Step 2: Performance profiling on cloud-hosted device

After compiling the model in Step 1, you can profile it on-device using target_model. Note that this script runs the model on a device automatically provisioned in the cloud. Once the job is submitted, you can navigate to the provided job URL to view a variety of on-device performance metrics.

```python
profile_job = hub.submit_profile_job(
    model=target_model,
    device=device,
)
```

Step 3: Verify on-device accuracy

To verify the accuracy of the model on-device, you can run on-device inference on sample input data on the same cloud-hosted device.

```python
input_data = torch_model.sample_inputs()
inference_job = hub.submit_inference_job(
    model=target_model,
    device=device,
    inputs=input_data,
)
on_device_output = inference_job.download_output_data()
```

With the output of the model, you can compute metrics such as PSNR or relative error, or spot-check the output against the expected output.
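Both metrics can be computed once the PyTorch and on-device outputs are flattened to equal-length lists of floats. This is a minimal sketch: it uses the reference output's peak absolute value as the PSNR signal range, which is one common convention and an assumption here, not necessarily what the export script uses. The sample values are hypothetical.

```python
import math


def psnr(reference, test):
    """PSNR in dB; uses the reference's peak absolute value as the signal range (an assumption)."""
    if len(reference) != len(test):
        raise ValueError("outputs must have the same number of elements")
    mse = sum((r - t) ** 2 for r, t in zip(reference, test)) / len(reference)
    if mse == 0:
        return math.inf  # identical outputs
    peak = max(abs(r) for r in reference)
    return 10 * math.log10(peak ** 2 / mse)


def max_relative_error(reference, test, eps=1e-12):
    """Largest |r - t| / |r| over all elements; eps guards against division by zero."""
    return max(abs(r - t) / max(abs(r), eps) for r, t in zip(reference, test))


# Hypothetical flattened outputs (not real model results).
torch_out = [2.0, -1.0, 0.5, 4.0]
device_out = [2.1, -1.0, 0.45, 3.9]

print(f"PSNR: {psnr(torch_out, device_out):.2f} dB")
print(f"max relative error: {max_relative_error(torch_out, device_out):.3f}")
```

A higher PSNR and a lower relative error both indicate that the on-device output is closer to the PyTorch reference.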

Note: On-device profiling and inference require access to Qualcomm® AI Hub. Sign up for access.

Deploying compiled model to Android

The models can be deployed using multiple runtimes:

- TensorFlow Lite (.tflite export): This tutorial provides a guide to deploy the .tflite model in an Android application.
- QNN (.so export): This sample app provides instructions on how to use the .so shared library in an Android application.

View on Qualcomm® AI Hub

Get more details on ResNet-3D's performance across various devices here. Explore all available models on Qualcomm® AI Hub.

License

- The license for the original implementation of ResNet-3D can be found here.

- The license for the compiled assets for on-device deployment can be found here.


Community

- Join our AI Hub Slack community to collaborate, post questions and learn more about on-device AI.

- For questions or feedback please reach out to us.
